Search Results for author: Shizhong Han

Found 19 papers, 4 papers with code

FutureDepth: Learning to Predict the Future Improves Video Depth Estimation

no code implementations • 19 Mar 2024 • Rajeev Yasarla, Manish Kumar Singh, Hong Cai, Yunxiao Shi, Jisoo Jeong, Yinhao Zhu, Shizhong Han, Risheek Garrepalli, Fatih Porikli

In this paper, we propose a novel video depth estimation approach, FutureDepth, which enables the model to implicitly leverage multi-frame and motion cues to improve depth estimation by making it learn to predict the future at training.

Ranked #2 on Monocular Depth Estimation on KITTI Eigen split

OpenShape: Scaling Up 3D Shape Representation Towards Open-World Understanding

1 code implementation • NeurIPS 2023 • Minghua Liu, Ruoxi Shi, Kaiming Kuang, Yinhao Zhu, Xuanlin Li, Shizhong Han, Hong Cai, Fatih Porikli, Hao Su

Due to their alignment with CLIP embeddings, our learned shape representations can also be integrated with off-the-shelf CLIP-based models for various applications, such as point cloud captioning and point cloud-conditioned image generation.

Ranked #5 on Zero-Shot Transfer 3D Point Cloud Classification on ModelNet40 (using extra training data)

4D Panoptic Segmentation as Invariant and Equivariant Field Prediction

no code implementations • ICCV 2023 • Minghan Zhu, Shizhong Han, Hong Cai, Shubhankar Borse, Maani Ghaffari, Fatih Porikli

In this paper, we develop rotation-equivariant neural networks for 4D panoptic segmentation.

PartSLIP: Low-Shot Part Segmentation for 3D Point Clouds via Pretrained Image-Language Models

2 code implementations • CVPR 2023 • Minghua Liu, Yinhao Zhu, Hong Cai, Shizhong Han, Zhan Ling, Fatih Porikli, Hao Su

Generalizable 3D part segmentation is important but challenging in vision and robotics.

Volumetric Attention for 3D Medical Image Segmentation and Detection

no code implementations • 4 Apr 2020 • Xudong Wang, Shizhong Han, Yunqiang Chen, Dashan Gao, Nuno Vasconcelos

A volumetric attention (VA) module for 3D medical image segmentation and detection is proposed.

Finding novelty with uncertainty

2 code implementations • 11 Feb 2020 • Jacob C. Reinhold, Yufan He, Shizhong Han, Yunqiang Chen, Dashan Gao, Junghoon Lee, Jerry L. Prince, Aaron Carass

Medical images are often used to detect and characterize pathology and disease; however, automatically identifying and segmenting pathology in medical images is challenging because the appearance of pathology across diseases varies widely.

Validating uncertainty in medical image translation

1 code implementation • 11 Feb 2020 • Jacob C. Reinhold, Yufan He, Shizhong Han, Yunqiang Chen, Dashan Gao, Junghoon Lee, Jerry L. Prince, Aaron Carass

Medical images are increasingly used as input to deep neural networks to produce quantitative values that aid researchers and clinicians.

Outlier Guided Optimization of Abdominal Segmentation

no code implementations • 10 Feb 2020 • Yuchen Xu, Olivia Tang, Yucheng Tang, Ho Hin Lee, Yunqiang Chen, Dashan Gao, Shizhong Han, Riqiang Gao, Michael R. Savona, Richard G. Abramson, Yuankai Huo, Bennett A. Landman

We built on a pre-trained 3D U-Net model for abdominal multi-organ segmentation and augmented the dataset either with outlier data (e.g., exemplars for which the baseline algorithm failed) or inliers (e.g., exemplars for which the baseline algorithm worked).

Validation and Optimization of Multi-Organ Segmentation on Clinical Imaging Archives

no code implementations • 10 Feb 2020 • Yuchen Xu, Olivia Tang, Yucheng Tang, Ho Hin Lee, Yunqiang Chen, Dashan Gao, Shizhong Han, Riqiang Gao, Michael R. Savona, Richard G. Abramson, Yuankai Huo, Bennett A. Landman

A 2015 MICCAI challenge spurred substantial innovation in multi-organ abdominal CT segmentation with both traditional and deep learning methods.

Stochastic tissue window normalization of deep learning on computed tomography

no code implementations • 1 Dec 2019 • Yuankai Huo, Yucheng Tang, Yunqiang Chen, Dashan Gao, Shizhong Han, Shunxing Bao, Smita De, James G. Terry, Jeffrey J. Carr, Richard G. Abramson, Bennett A. Landman

We evaluate the effectiveness of both with and without using soft tissue window normalization on multisite CT cohorts.

Contrast Phase Classification with a Generative Adversarial Network

no code implementations • 14 Nov 2019 • Yucheng Tang, Ho Hin Lee, Yuchen Xu, Olivia Tang, Yunqiang Chen, Dashan Gao, Shizhong Han, Riqiang Gao, Camilo Bermudez, Michael R. Savona, Richard G. Abramson, Yuankai Huo, Bennett A. Landman

Dynamic contrast enhanced computed tomography (CT) is an imaging technique that provides critical information on the relationship of vascular structure and dynamics in the context of underlying anatomy.

Semi-Supervised Multi-Organ Segmentation through Quality Assurance Supervision

no code implementations • 12 Nov 2019 • Ho Hin Lee, Yucheng Tang, Olivia Tang, Yuchen Xu, Yunqiang Chen, Dashan Gao, Shizhong Han, Riqiang Gao, Michael R. Savona, Richard G. Abramson, Yuankai Huo, Bennett A. Landman

The contributions of the proposed method are threefold: We show that (1) the QA scores can be used as a loss function to perform semi-supervised learning for unlabeled data, (2) the well trained discriminator is learnt by QA score rather than traditional true/false, and (3) the performance of multi-organ segmentation on unlabeled datasets can be fine-tuned with more robust and higher accuracy than the original baseline method.

Feature-level and Model-level Audiovisual Fusion for Emotion Recognition in the Wild

no code implementations • 6 Jun 2019 • Jie Cai, Zibo Meng, Ahmed Shehab Khan, Zhiyuan Li, James O'Reilly, Shizhong Han, Ping Liu, Min Chen, Yan Tong

In this paper, we proposed two strategies to fuse information extracted from different modalities, i.e., audio and visual.

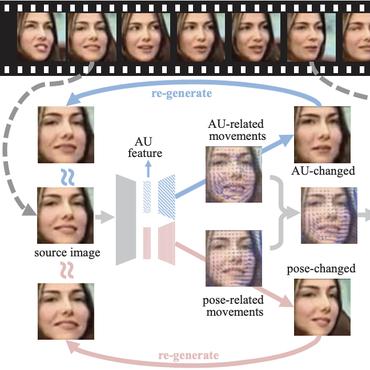

Identity-Free Facial Expression Recognition using conditional Generative Adversarial Network

no code implementations • 19 Mar 2019 • Jie Cai, Zibo Meng, Ahmed Shehab Khan, Zhiyuan Li, James O'Reilly, Shizhong Han, Yan Tong

A novel Identity-Free conditional Generative Adversarial Network (IF-GAN) was proposed for Facial Expression Recognition (FER) to explicitly reduce high inter-subject variations caused by identity-related facial attributes, e.g., age, race, and gender.

Facial Expression Recognition

Facial Expression Recognition (FER)

Optimizing Filter Size in Convolutional Neural Networks for Facial Action Unit Recognition

no code implementations • CVPR 2018 • Shizhong Han, Zibo Meng, Zhiyuan Li, James O'Reilly, Jie Cai, Xiao-Feng Wang, Yan Tong

Most recently, Convolutional Neural Networks (CNNs) have shown promise for facial AU recognition, where predefined and fixed convolution filter sizes are employed.

Incremental Boosting Convolutional Neural Network for Facial Action Unit Recognition

no code implementations • NeurIPS 2016 • Shizhong Han, Zibo Meng, Ahmed Shehab Khan, Yan Tong

Experimental results on four benchmark AU databases have demonstrated that the IB-CNN yields significant improvement over the traditional CNN and the boosting CNN without incremental learning, as well as outperforming the state-of-the-art CNN-based methods in AU recognition.

Improving Speech Related Facial Action Unit Recognition by Audiovisual Information Fusion

no code implementations • 29 Jun 2017 • Zibo Meng, Shizhong Han, Ping Liu, Yan Tong

Instead of solely improving visual observations, this paper presents a novel audiovisual fusion framework, which makes the best use of visual and acoustic cues in recognizing speech-related facial AUs.

Listen to Your Face: Inferring Facial Action Units from Audio Channel

no code implementations • 23 Jun 2017 • Zibo Meng, Shizhong Han, Yan Tong

Different from all prior work that utilized visual observations for facial AU recognition, this paper presents a novel approach that recognizes speech-related AUs exclusively from audio signals based on the fact that facial activities are highly correlated with voice during speech.

Facial Expression Recognition via a Boosted Deep Belief Network

no code implementations • CVPR 2014 • Ping Liu, Shizhong Han, Zibo Meng, Yan Tong

A training process for facial expression recognition is usually performed sequentially in three individual stages: feature learning, feature selection, and classifier construction.

Facial Expression Recognition

Facial Expression Recognition (FER)
