Search Results for author: Shaun Canavan

Found 11 papers, 0 papers with code

Random Forest Regression for continuous affect using Facial Action Units

no code implementations • 24 Mar 2022 • Saurabh Hinduja, Shaun Canavan, Liza Jivnani, Sk Rahatul Jannat, V Sri Chakra Kumar

In this paper, we describe our approach to the arousal and valence track of the 3rd Workshop and Competition on Affective Behavior Analysis in-the-wild (ABAW).

Quantified Facial Expressiveness for Affective Behavior Analytics

no code implementations • 5 Oct 2021 • Md Taufeeq Uddin, Shaun Canavan

Quantifying facial expressiveness is crucial for analyzing human affective behavior at scale.

Accounting for Affect in Pain Level Recognition

no code implementations • 15 Nov 2020 • Md Taufeeq Uddin, Shaun Canavan, Ghada Zamzmi

In this work, we address the importance of affect in automated pain assessment and its implications for real-world settings.

Quantified Facial Temporal-Expressiveness Dynamics for Affect Analysis

no code implementations • 28 Oct 2020 • Md Taufeeq Uddin, Shaun Canavan

The quantification of visual affect data (e.g., face images) is essential for efficiently building and monitoring automated affect modeling systems.

Impact of Action Unit Occurrence Patterns on Detection

no code implementations • 15 Oct 2020 • Saurabh Hinduja, Shaun Canavan, Saandeep Aathreya

In this paper, we investigate the impact of action unit (AU) occurrence patterns on AU detection.

Impact of multiple modalities on emotion recognition: investigation into 3D facial landmarks, action units, and physiological data

no code implementations • 17 May 2020 • Diego Fabiano, Manikandan Jaishanker, Shaun Canavan

We present an analysis of 3D facial data, action units, and physiological data in terms of their impact on emotion recognition.

Subject Identification Across Large Expression Variations Using 3D Facial Landmarks

no code implementations • 17 May 2020 • Sk Rahatul Jannat, Diego Fabiano, Shaun Canavan, Tempestt Neal

Landmark localization is an important first step in geometry-based vision research, including subject identification.

Detecting Forged Facial Videos using convolutional neural network

no code implementations • 17 May 2020 • Neilesh Sambhu, Shaun Canavan

In this paper, we propose a method to detect forged face videos posted online.

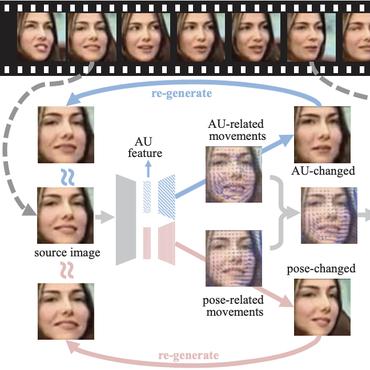

Facial Action Unit Detection using 3D Facial Landmarks

no code implementations • 17 May 2020 • Saurabh Hinduja, Shaun Canavan

In this paper, we propose to detect facial action units (AUs) using 3D facial landmarks.

Studying the Impact of Mood on Identifying Smartphone Users

no code implementations • 27 Jun 2019 • Khadija Zanna, Sayde King, Tempestt Neal, Shaun Canavan

This paper explores how well smartphone users can be identified when samples collected while the subject felt happy, upset, or stressed are present in or absent from the data.

Multimodal Spontaneous Emotion Corpus for Human Behavior Analysis

no code implementations • CVPR 2016 • Zheng Zhang, Jeff M. Girard, Yue Wu, Xing Zhang, Peng Liu, Umur Ciftci, Shaun Canavan, Michael Reale, Andy Horowitz, Huiyuan Yang, Jeffrey F. Cohn, Qiang Ji, Lijun Yin

The corpus also includes features derived from 3D, 2D, and infrared (IR) sensors, as well as baseline results for facial expression and action unit detection.