Search Results for author: Muhammet Bastan

Found 10 papers, 1 paper with code

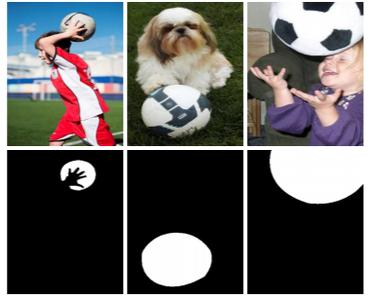

Unsupervised and semi-supervised co-salient object detection via segmentation frequency statistics

no code implementations • 11 Nov 2023 • Souradeep Chakraborty, Shujon Naha, Muhammet Bastan, Amit Kumar K C, Dimitris Samaras

Our unsupervised model is a great pre-training initialization for our semi-supervised model SS-CoSOD, especially when very limited labeled data is available for training.

ProcSim: Proxy-based Confidence for Robust Similarity Learning

no code implementations • 1 Nov 2023 • Oriol Barbany, Xiaofan Lin, Muhammet Bastan, Arnab Dhua

Deep Metric Learning (DML) methods aim at learning an embedding space in which distances are closely related to the inherent semantic similarity of the inputs.

Hierarchical Proxy-based Loss for Deep Metric Learning

no code implementations • 25 Mar 2021 • Zhibo Yang, Muhammet Bastan, Xinliang Zhu, Doug Gray, Dimitris Samaras

In this paper, we present a framework that leverages this implicit hierarchy by imposing a hierarchical structure on the proxies and can be used with any existing proxy-based loss.

T-VSE: Transformer-Based Visual Semantic Embedding

no code implementations • 17 May 2020 • Muhammet Bastan, Arnau Ramisa, Mehmet Tek

Transformer models have recently achieved impressive performance on NLP tasks, owing to new algorithms for self-supervised pre-training on very large text corpora.

Large Scale Open-Set Deep Logo Detection

1 code implementation • 18 Nov 2019 • Muhammet Bastan, Hao-Yu Wu, Tian Cao, Bhargava Kota, Mehmet Tek

We present an open-set logo detection (OSLD) system, which can detect (localize and recognize) any number of unseen logo classes without re-training; it only requires a small set of canonical logo images for each logo class.

Semantic Granularity Metric Learning for Visual Search

no code implementations • 14 Nov 2019 • Dipu Manandhar, Muhammet Bastan, Kim-Hui Yap

In view of this, we propose a new deep semantic granularity metric learning (SGML) that develops a novel idea of leveraging attribute semantic space to capture different granularity of similarity, and then integrates this information into deep metric learning.

Remote Detection of Idling Cars Using Infrared Imaging and Deep Networks

no code implementations • 28 Apr 2018 • Muhammet Bastan, Kim-Hui Yap, Lap-Pui Chau

First, we detect the cars in each IR image using a convolutional neural network, which is pre-trained on regular RGB images and fine-tuned on IR images for higher accuracy.

Active Canny: Edge Detection and Recovery with Open Active Contour Models

no code implementations • 12 Sep 2016 • Muhammet Bastan, S. Saqib Bukhari, Thomas M. Breuel

This way, the strong edges are used to recover weak or missing edges by considering the local edge structures, instead of blindly linking them if gradient magnitudes are above some threshold.

Multi-View Product Image Search Using Deep ConvNets Representations

no code implementations • 11 Aug 2016 • Muhammet Bastan, Ozgur Yilmaz

We concluded that (1) multi-view queries with deep ConvNets representations perform significantly better than single view queries, (2) ConvNets perform much better than BoWs and have room for further improvement, (3) pre-training of ConvNets on a different image dataset with background clutter is needed to obtain good performance on cluttered product image queries obtained with a mobile phone.

Mobile Multi-View Object Image Search

no code implementations • 31 Jul 2015 • Fatih Calisir, Muhammet Bastan, Ozgur Ulusoy, Ugur Gudukbay

High user interaction capability of mobile devices can help improve the accuracy of mobile visual search systems.