Search Results for author: Han Liu

Found 212 papers, 61 papers with code

MMGRec: Multimodal Generative Recommendation with Transformer Model

no code implementations • 25 Apr 2024 • Han Liu, Yinwei Wei, Xuemeng Song, Weili Guan, Yuan-Fang Li, Liqiang Nie

Multimodal recommendation aims to recommend user-preferred candidates based on her/his historically interacted items and associated multimodal information.

PRISM: A Promptable and Robust Interactive Segmentation Model with Visual Prompts

1 code implementation • 23 Apr 2024 • Hao Li, Han Liu, Dewei Hu, Jiacheng Wang, Ipek Oguz

(3) Corrective learning.

EIVEN: Efficient Implicit Attribute Value Extraction using Multimodal LLM

no code implementations • 13 Apr 2024 • Henry Peng Zou, Gavin Heqing Yu, Ziwei Fan, Dan Bu, Han Liu, Peng Dai, Dongmei Jia, Cornelia Caragea

To address these issues, we introduce EIVEN, a data- and parameter-efficient generative framework that pioneers the use of multimodal LLM for implicit attribute value extraction.

Nonparametric Modern Hopfield Models

1 code implementation • 5 Apr 2024 • Jerry Yao-Chieh Hu, Bo-Yu Chen, Dennis Wu, Feng Ruan, Han Liu

We present a nonparametric construction for deep learning compatible modern Hopfield models and utilize this framework to debut an efficient variant.

Uniform Memory Retrieval with Larger Capacity for Modern Hopfield Models

1 code implementation • 4 Apr 2024 • Dennis Wu, Jerry Yao-Chieh Hu, Teng-Yun Hsiao, Han Liu

Specifically, we accomplish this by constructing a separation loss $\mathcal{L}_\Phi$ that separates the local minima of kernelized energy by separating stored memory patterns in kernel space.

Outlier-Efficient Hopfield Layers for Large Transformer-Based Models

1 code implementation • 4 Apr 2024 • Jerry Yao-Chieh Hu, Pei-Hsuan Chang, Robin Luo, Hong-Yu Chen, Weijian Li, Wei-Po Wang, Han Liu

Interestingly, this memory model manifests a model-based interpretation of an outlier-efficient attention mechanism ($\text{Softmax}_1$): it is an approximation of the memory retrieval process of $\mathtt{OutEffHop}$.

Ranked #1 on Quantization on Wiki-40B

BiSHop: Bi-Directional Cellular Learning for Tabular Data with Generalized Sparse Modern Hopfield Model

1 code implementation • 4 Apr 2024 • Chenwei Xu, Yu-Chao Huang, Jerry Yao-Chieh Hu, Weijian Li, Ammar Gilani, Hsi-Sheng Goan, Han Liu

We introduce the Bi-Directional Sparse Hopfield Network (BiSHop), a novel end-to-end framework for deep tabular learning.

Chain-of-Action: Faithful and Multimodal Question Answering through Large Language Models

no code implementations • 26 Mar 2024 • Zhenyu Pan, Haozheng Luo, Manling Li, Han Liu

We present a Chain-of-Action (CoA) framework for multimodal and retrieval-augmented Question-Answering (QA).

USE: Dynamic User Modeling with Stateful Sequence Models

no code implementations • 20 Mar 2024 • Zhihan Zhou, Qixiang Fang, Leonardo Neves, Francesco Barbieri, Yozen Liu, Han Liu, Maarten W. Bos, Ron Dotsch

Furthermore, we introduce a novel training objective named future W-behavior prediction to transcend the limitations of next-token prediction by forecasting a broader horizon of upcoming user behaviors.

On Languaging a Simulation Engine

no code implementations • 26 Feb 2024 • Han Liu, Liantang Li

Language model intelligence is revolutionizing the way we program materials simulations.

AdAdaGrad: Adaptive Batch Size Schemes for Adaptive Gradient Methods

no code implementations • 17 Feb 2024 • Tim Tsz-Kit Lau, Han Liu, Mladen Kolar

The choice of batch sizes in stochastic gradient optimizers is critical for model training.

VQAttack: Transferable Adversarial Attacks on Visual Question Answering via Pre-trained Models

no code implementations • 16 Feb 2024 • Ziyi Yin, Muchao Ye, Tianrong Zhang, Jiaqi Wang, Han Liu, Jinghui Chen, Ting Wang, Fenglong Ma

Correspondingly, we propose a novel VQAttack model, which can iteratively generate both image and text perturbations with the designed modules: the large language model (LLM)-enhanced image attack and the cross-modal joint attack module.

DNABERT-S: Learning Species-Aware DNA Embedding with Genome Foundation Models

1 code implementation • 13 Feb 2024 • Zhihan Zhou, Weimin Wu, Harrison Ho, Jiayi Wang, Lizhen Shi, Ramana V Davuluri, Zhong Wang, Han Liu

To encourage effective embeddings to error-prone long-read DNA sequences, we introduce Manifold Instance Mixup (MI-Mix), a contrastive objective that mixes the hidden representations of DNA sequences at randomly selected layers and trains the model to recognize and differentiate these mixed proportions at the output layer.

On Computational Limits of Modern Hopfield Models: A Fine-Grained Complexity Analysis

no code implementations • 7 Feb 2024 • Jerry Yao-Chieh Hu, Thomas Lin, Zhao Song, Han Liu

Specifically, we establish an upper bound criterion for the norm of input query patterns and memory patterns.

HQA-Attack: Toward High Quality Black-Box Hard-Label Adversarial Attack on Text

1 code implementation • NeurIPS 2023 • Han Liu, Zhi Xu, Xiaotong Zhang, Feng Zhang, Fenglong Ma, Hongyang Chen, Hong Yu, Xianchao Zhang

Black-box hard-label adversarial attack on text is a practical and challenging task, as the text data space is inherently discrete and non-differentiable, and only the predicted label is accessible.

Two Heads Are Better Than One: Integrating Knowledge from Knowledge Graphs and Large Language Models for Entity Alignment

no code implementations • 30 Jan 2024 • Linyao Yang, Hongyang Chen, Xiao Wang, Jing Yang, Fei-Yue Wang, Han Liu

The final prediction of the equivalent entity is derived from the LLM's output.

LLM4Vuln: A Unified Evaluation Framework for Decoupling and Enhancing LLMs' Vulnerability Reasoning

no code implementations • 29 Jan 2024 • Yuqiang Sun, Daoyuan Wu, Yue Xue, Han Liu, Wei Ma, Lyuye Zhang, Miaolei Shi, Yang Liu

Large language models (LLMs) have demonstrated significant potential for many downstream tasks, including those requiring human-level intelligence, such as vulnerability detection.

Automated Fusion of Multimodal Electronic Health Records for Better Medical Predictions

1 code implementation • 20 Jan 2024 • Suhan Cui, Jiaqi Wang, Yuan Zhong, Han Liu, Ting Wang, Fenglong Ma

The widespread adoption of Electronic Health Record (EHR) systems in healthcare institutes has generated vast amounts of medical data, offering significant opportunities for improving healthcare services through deep learning techniques.

Sparse PCA with Oracle Property

no code implementations • NeurIPS 2014 • Quanquan Gu, Zhaoran Wang, Han Liu

In particular, under a weak assumption on the magnitude of the population projection matrix, one estimator within this family exactly recovers the true support with high probability, has exact rank-$k$, and attains a $\sqrt{s/n}$ statistical rate of convergence with $s$ being the subspace sparsity level and $n$ the sample size.

Beyond PID Controllers: PPO with Neuralized PID Policy for Proton Beam Intensity Control in Mu2e

no code implementations • 28 Dec 2023 • Chenwei Xu, Jerry Yao-Chieh Hu, Aakaash Narayanan, Mattson Thieme, Vladimir Nagaslaev, Mark Austin, Jeremy Arnold, Jose Berlioz, Pierrick Hanlet, Aisha Ibrahim, Dennis Nicklaus, Jovan Mitrevski, Jason Michael St. John, Gauri Pradhan, Andrea Saewert, Kiyomi Seiya, Brian Schupbach, Randy Thurman-Keup, Nhan Tran, Rui Shi, Seda Ogrenci, Alexis Maya-Isabelle Shuping, Kyle Hazelwood, Han Liu

We introduce a novel Proximal Policy Optimization (PPO) algorithm aimed at addressing the challenge of maintaining a uniform proton beam intensity delivery in the Muon to Electron Conversion Experiment (Mu2e) at Fermi National Accelerator Laboratory (Fermilab).

STanHop: Sparse Tandem Hopfield Model for Memory-Enhanced Time Series Prediction

1 code implementation • 28 Dec 2023 • Dennis Wu, Jerry Yao-Chieh Hu, Weijian Li, Bo-Yu Chen, Han Liu

We present STanHop-Net (Sparse Tandem Hopfield Network) for multivariate time series prediction with memory-enhanced capabilities.

Learning Site-specific Styles for Multi-institutional Unsupervised Cross-modality Domain Adaptation

1 code implementation • 21 Nov 2023 • Han Liu, Yubo Fan, Zhoubing Xu, Benoit M. Dawant, Ipek Oguz

In this paper, we present our solution to tackle the multi-institutional unsupervised domain adaptation for the crossMoDA 2023 challenge.

Assessing Test-time Variability for Interactive 3D Medical Image Segmentation with Diverse Point Prompts

1 code implementation • 13 Nov 2023 • Hao Li, Han Liu, Dewei Hu, Jiacheng Wang, Ipek Oguz

In this paper, we assess the test-time variability for interactive medical image segmentation with diverse point prompts.

Promise: Prompt-driven 3D Medical Image Segmentation Using Pretrained Image Foundation Models

1 code implementation • 30 Oct 2023 • Hao Li, Han Liu, Dewei Hu, Jiacheng Wang, Ipek Oguz

To address prevalent issues in medical imaging, such as data acquisition challenges and label availability, transfer learning from natural to medical image domains serves as a viable strategy to produce reliable segmentation results.

Boosting Decision-Based Black-Box Adversarial Attack with Gradient Priors

no code implementations • 29 Oct 2023 • Han Liu, Xingshuo Huang, Xiaotong Zhang, Qimai Li, Fenglong Ma, Wei Wang, Hongyang Chen, Hong Yu, Xianchao Zhang

Decision-based methods have been shown to be effective in black-box adversarial attacks, as they can obtain satisfactory performance and only require access to the final model prediction.

VLATTACK: Multimodal Adversarial Attacks on Vision-Language Tasks via Pre-trained Models

1 code implementation • NeurIPS 2023 • Ziyi Yin, Muchao Ye, Tianrong Zhang, Tianyu Du, Jinguo Zhu, Han Liu, Jinghui Chen, Ting Wang, Fenglong Ma

In this paper, we aim to investigate a new yet practical task to craft image and text perturbations using pre-trained VL models to attack black-box fine-tuned models on different downstream tasks.

Beyond Reverse KL: Generalizing Direct Preference Optimization with Diverse Divergence Constraints

no code implementations • 28 Sep 2023 • Chaoqi Wang, Yibo Jiang, Chenghao Yang, Han Liu, Yuxin Chen

The increasing capabilities of large language models (LLMs) raise opportunities for artificial general intelligence but concurrently amplify safety concerns, such as potential misuse of AI systems, necessitating effective AI alignment.

On Sparse Modern Hopfield Model

1 code implementation • NeurIPS 2023 • Jerry Yao-Chieh Hu, Donglin Yang, Dennis Wu, Chenwei Xu, Bo-Yu Chen, Han Liu

Building upon this, we derive the sparse memory retrieval dynamics from the sparse energy function and show its one-step approximation is equivalent to the sparse-structured attention.

False Negative/Positive Control for SAM on Noisy Medical Images

1 code implementation • 20 Aug 2023 • Xing Yao, Han Liu, Dewei Hu, Daiwei Lu, Ange Lou, Hao Li, Ruining Deng, Gabriel Arenas, Baris Oguz, Nadav Schwartz, Brett C Byram, Ipek Oguz

The method couples multi-box prompt augmentation and an aleatoric uncertainty-based false-negative (FN) and false-positive (FP) correction (FNPC) strategy.

CATS v2: Hybrid encoders for robust medical segmentation

2 code implementations • 11 Aug 2023 • Hao Li, Han Liu, Dewei Hu, Xing Yao, Jiacheng Wang, Ipek Oguz

We fuse the information from the convolutional encoder and the transformer at the skip connections of different resolutions to form the final segmentation.

GPTScan: Detecting Logic Vulnerabilities in Smart Contracts by Combining GPT with Program Analysis

1 code implementation • 7 Aug 2023 • Yuqiang Sun, Daoyuan Wu, Yue Xue, Han Liu, Haijun Wang, Zhengzi Xu, Xiaofei Xie, Yang Liu

Instead of relying solely on GPT to identify vulnerabilities, which can lead to high false positives and is limited by GPT's pre-trained knowledge, we utilize GPT as a versatile code understanding tool.

COLosSAL: A Benchmark for Cold-start Active Learning for 3D Medical Image Segmentation

1 code implementation • 22 Jul 2023 • Han Liu, Hao Li, Xing Yao, Yubo Fan, Dewei Hu, Benoit Dawant, Vishwesh Nath, Zhoubing Xu, Ipek Oguz

Cold-start AL is highly relevant in many practical scenarios but has been under-explored, especially for 3D medical segmentation tasks requiring substantial annotation effort.

Efficient Action Robust Reinforcement Learning with Probabilistic Policy Execution Uncertainty

no code implementations • 15 Jul 2023 • Guanlin Liu, Zhihan Zhou, Han Liu, Lifeng Lai

Robust reinforcement learning (RL) aims to find a policy that optimizes the worst-case performance in the face of uncertainties.

Learning Multiple Coordinated Agents under Directed Acyclic Graph Constraints

no code implementations • 13 Jul 2023 • Jaeyeon Jang, Diego Klabjan, Han Liu, Nital S. Patel, Xiuqi Li, Balakrishnan Ananthanarayanan, Husam Dauod, Tzung-Han Juang

This paper proposes a novel multi-agent reinforcement learning (MARL) method to learn multiple coordinated agents under directed acyclic graph (DAG) constraints.

Real-time High-Resolution Neural Network with Semantic Guidance for Crack Segmentation

1 code implementation • 1 Jul 2023 • Yongshang Li, Ronggui Ma, Han Liu, Gaoli Cheng

Deep learning plays an important role in crack segmentation, but most works utilize off-the-shelf or improved models that have not been specifically developed for this task.

VesselMorph: Domain-Generalized Retinal Vessel Segmentation via Shape-Aware Representation

no code implementations • 1 Jul 2023 • Dewei Hu, Hao Li, Han Liu, Xing Yao, Jiacheng Wang, Ipek Oguz

We map the intensity image and the tensor field to a latent space for feature extraction.

DNABERT-2: Efficient Foundation Model and Benchmark For Multi-Species Genome

4 code implementations • 26 Jun 2023 • Zhihan Zhou, Yanrong Ji, Weijian Li, Pratik Dutta, Ramana Davuluri, Han Liu

Decoding the linguistic intricacies of the genome is a crucial problem in biology, and pre-trained foundational models such as DNABERT and Nucleotide Transformer have made significant strides in this area.

Ranked #1 on Core Promoter Detection on GUE

Feature Programming for Multivariate Time Series Prediction

1 code implementation • 9 Jun 2023 • Alex Reneau, Jerry Yao-Chieh Hu, Chenwei Xu, Weijian Li, Ammar Gilani, Han Liu

We introduce the concept of programmable feature engineering for time series modeling and propose a feature programming framework.

Non-Log-Concave and Nonsmooth Sampling via Langevin Monte Carlo Algorithms

1 code implementation • 25 May 2023 • Tim Tsz-Kit Lau, Han Liu, Thomas Pock

We study the problem of approximate sampling from non-log-concave distributions, e.g., Gaussian mixtures, which is often challenging even in low dimensions due to their multimodality.

COSST: Multi-organ Segmentation with Partially Labeled Datasets Using Comprehensive Supervisions and Self-training

no code implementations • 27 Apr 2023 • Han Liu, Zhoubing Xu, Riqiang Gao, Hao Li, Jianing Wang, Guillaume Chabin, Ipek Oguz, Sasa Grbic

We revisit the problem from a perspective of partial label supervision signals and identify two signals derived from ground truth and one from pseudo labels.

Boosting Few-Shot Text Classification via Distribution Estimation

no code implementations • 26 Mar 2023 • Han Liu, Feng Zhang, Xiaotong Zhang, Siyang Zhao, Fenglong Ma, Xiao-Ming Wu, Hongyang Chen, Hong Yu, Xianchao Zhang

Distribution estimation has been demonstrated as one of the most effective approaches in dealing with few-shot image classification, as the low-level patterns and underlying representations can be easily transferred across different tasks in computer vision domain.

Few-Shot Image Classification

Few-Shot Text Classification

SSL^2: Self-Supervised Learning meets Semi-Supervised Learning: Multiple Sclerosis Segmentation in 7T-MRI from large-scale 3T-MRI

no code implementations • 9 Mar 2023 • Jiacheng Wang, Hao Li, Han Liu, Dewei Hu, Daiwei Lu, Keejin Yoon, Kelsey Barter, Francesca Bagnato, Ipek Oguz

A potential solution is to leverage the information available in large public datasets in conjunction with a target dataset which only has limited labeled data.

Learning Human-Compatible Representations for Case-Based Decision Support

1 code implementation • 6 Mar 2023 • Han Liu, Yizhou Tian, Chacha Chen, Shi Feng, Yuxin Chen, Chenhao Tan

Despite the promising performance of supervised learning, representations learned by supervised models may not align well with human intuitions: what models consider as similar examples can be perceived as distinct by humans.

Real-Time Image Demoireing on Mobile Devices

1 code implementation • 4 Feb 2023 • Yuxin Zhang, Mingbao Lin, Xunchao Li, Han Liu, Guozhi Wang, Fei Chao, Shuai Ren, Yafei Wen, Xiaoxin Chen, Rongrong Ji

In this paper, we launch the first study on accelerating demoireing networks and propose a dynamic demoireing acceleration method (DDA) towards a real-time deployment on mobile devices.

HS-GCN: Hamming Spatial Graph Convolutional Networks for Recommendation

1 code implementation • 13 Jan 2023 • Han Liu, Yinwei Wei, Jianhua Yin, Liqiang Nie

Towards this end, existing methods tend to code users by modeling their Hamming similarities with the items they historically interact with, which are termed as the first-order similarities in this work.

SlowLiDAR: Increasing the Latency of LiDAR-Based Detection Using Adversarial Examples

1 code implementation • CVPR 2023 • Han Liu, Yuhao Wu, Zhiyuan Yu, Yevgeniy Vorobeychik, Ning Zhang

LiDAR-based perception is a central component of autonomous driving, playing a key role in tasks such as vehicle localization and obstacle detection.

RIATIG: Reliable and Imperceptible Adversarial Text-to-Image Generation With Natural Prompts

1 code implementation • CVPR 2023 • Han Liu, Yuhao Wu, Shixuan Zhai, Bo Yuan, Ning Zhang

The field of text-to-image generation has made remarkable strides in creating high-fidelity and photorealistic images.

Biomedical image analysis competitions: The state of current participation practice

no code implementations • 16 Dec 2022 • Matthias Eisenmann, Annika Reinke, Vivienn Weru, Minu Dietlinde Tizabi, Fabian Isensee, Tim J. Adler, Patrick Godau, Veronika Cheplygina, Michal Kozubek, Sharib Ali, Anubha Gupta, Jan Kybic, Alison Noble, Carlos Ortiz de Solórzano, Samiksha Pachade, Caroline Petitjean, Daniel Sage, Donglai Wei, Elizabeth Wilden, Deepak Alapatt, Vincent Andrearczyk, Ujjwal Baid, Spyridon Bakas, Niranjan Balu, Sophia Bano, Vivek Singh Bawa, Jorge Bernal, Sebastian Bodenstedt, Alessandro Casella, Jinwook Choi, Olivier Commowick, Marie Daum, Adrien Depeursinge, Reuben Dorent, Jan Egger, Hannah Eichhorn, Sandy Engelhardt, Melanie Ganz, Gabriel Girard, Lasse Hansen, Mattias Heinrich, Nicholas Heller, Alessa Hering, Arnaud Huaulmé, Hyunjeong Kim, Bennett Landman, Hongwei Bran Li, Jianning Li, Jun Ma, Anne Martel, Carlos Martín-Isla, Bjoern Menze, Chinedu Innocent Nwoye, Valentin Oreiller, Nicolas Padoy, Sarthak Pati, Kelly Payette, Carole Sudre, Kimberlin Van Wijnen, Armine Vardazaryan, Tom Vercauteren, Martin Wagner, Chuanbo Wang, Moi Hoon Yap, Zeyun Yu, Chun Yuan, Maximilian Zenk, Aneeq Zia, David Zimmerer, Rina Bao, Chanyeol Choi, Andrew Cohen, Oleh Dzyubachyk, Adrian Galdran, Tianyuan Gan, Tianqi Guo, Pradyumna Gupta, Mahmood Haithami, Edward Ho, Ikbeom Jang, Zhili Li, Zhengbo Luo, Filip Lux, Sokratis Makrogiannis, Dominik Müller, Young-tack Oh, Subeen Pang, Constantin Pape, Gorkem Polat, Charlotte Rosalie Reed, Kanghyun Ryu, Tim Scherr, Vajira Thambawita, Haoyu Wang, Xinliang Wang, Kele Xu, Hung Yeh, Doyeob Yeo, Yixuan Yuan, Yan Zeng, Xin Zhao, Julian Abbing, Jannes Adam, Nagesh Adluru, Niklas Agethen, Salman Ahmed, Yasmina Al Khalil, Mireia Alenyà, Esa Alhoniemi, Chengyang An, Talha Anwar, Tewodros Weldebirhan Arega, Netanell Avisdris, Dogu Baran Aydogan, Yingbin Bai, Maria Baldeon Calisto, Berke Doga Basaran, Marcel Beetz, Cheng Bian, Hao Bian, Kevin Blansit, Louise Bloch, Robert Bohnsack, Sara Bosticardo, Jack Breen, Mikael Brudfors, Raphael Brüngel, Mariano Cabezas, Alberto Cacciola, Zhiwei Chen, Yucong Chen, Daniel Tianming Chen, Minjeong Cho, Min-Kook Choi, Chuantao Xie, Dana Cobzas, Julien Cohen-Adad, Jorge Corral Acero, Sujit Kumar Das, Marcela de Oliveira, Hanqiu Deng, Guiming Dong, Lars Doorenbos, Cory Efird, Sergio Escalera, Di Fan, Mehdi Fatan Serj, Alexandre Fenneteau, Lucas Fidon, Patryk Filipiak, René Finzel, Nuno R. Freitas, Christoph M. Friedrich, Mitchell Fulton, Finn Gaida, Francesco Galati, Christoforos Galazis, Chang Hee Gan, Zheyao Gao, Shengbo Gao, Matej Gazda, Beerend Gerats, Neil Getty, Adam Gibicar, Ryan Gifford, Sajan Gohil, Maria Grammatikopoulou, Daniel Grzech, Orhun Güley, Timo Günnemann, Chunxu Guo, Sylvain Guy, Heonjin Ha, Luyi Han, Il Song Han, Ali Hatamizadeh, Tian He, Jimin Heo, Sebastian Hitziger, SeulGi Hong, Seungbum Hong, Rian Huang, Ziyan Huang, Markus Huellebrand, Stephan Huschauer, Mustaffa Hussain, Tomoo Inubushi, Ece Isik Polat, Mojtaba Jafaritadi, SeongHun Jeong, Bailiang Jian, Yuanhong Jiang, Zhifan Jiang, Yueming Jin, Smriti Joshi, Abdolrahim Kadkhodamohammadi, Reda Abdellah Kamraoui, Inha Kang, Junghwa Kang, Davood Karimi, April Khademi, Muhammad Irfan Khan, Suleiman A. Khan, Rishab Khantwal, Kwang-Ju Kim, Timothy Kline, Satoshi Kondo, Elina Kontio, Adrian Krenzer, Artem Kroviakov, Hugo Kuijf, Satyadwyoom Kumar, Francesco La Rosa, Abhi Lad, Doohee Lee, Minho Lee, Chiara Lena, Hao Li, Ling Li, Xingyu Li, Fuyuan Liao, Kuanlun Liao, Arlindo Limede Oliveira, Chaonan Lin, Shan Lin, Akis Linardos, Marius George Linguraru, Han Liu, Tao Liu, Di Liu, Yanling Liu, João Lourenço-Silva, Jingpei Lu, Jiangshan Lu, Imanol Luengo, Christina B. Lund, Huan Minh Luu, Yi Lv, Uzay Macar, Leon Maechler, Sina Mansour L., Kenji Marshall, Moona Mazher, Richard McKinley, Alfonso Medela, Felix Meissen, Mingyuan Meng, Dylan Miller, Seyed Hossein Mirjahanmardi, Arnab Mishra, Samir Mitha, Hassan Mohy-ud-Din, Tony Chi Wing Mok, Gowtham Krishnan Murugesan, Enamundram Naga Karthik, Sahil Nalawade, Jakub Nalepa, Mohamed Naser, Ramin Nateghi, Hammad Naveed, Quang-Minh Nguyen, Cuong Nguyen Quoc, Brennan Nichyporuk, Bruno Oliveira, David Owen, Jimut Bahan Pal, Junwen Pan, Wentao Pan, Winnie Pang, Bogyu Park, Vivek Pawar, Kamlesh Pawar, Michael Peven, Lena Philipp, Tomasz Pieciak, Szymon Plotka, Marcel Plutat, Fattaneh Pourakpour, Domen Preložnik, Kumaradevan Punithakumar, Abdul Qayyum, Sandro Queirós, Arman Rahmim, Salar Razavi, Jintao Ren, Mina Rezaei, Jonathan Adam Rico, ZunHyan Rieu, Markus Rink, Johannes Roth, Yusely Ruiz-Gonzalez, Numan Saeed, Anindo Saha, Mostafa Salem, Ricardo Sanchez-Matilla, Kurt Schilling, Wei Shao, Zhiqiang Shen, Ruize Shi, Pengcheng Shi, Daniel Sobotka, Théodore Soulier, Bella Specktor Fadida, Danail Stoyanov, Timothy Sum Hon Mun, Xiaowu Sun, Rong Tao, Franz Thaler, Antoine Théberge, Felix Thielke, Helena Torres, Kareem A. Wahid, Jiacheng Wang, Yifei Wang, Wei Wang, Xiong Wang, Jianhui Wen, Ning Wen, Marek Wodzinski, Ye Wu, Fangfang Xia, Tianqi Xiang, Chen Xiaofei, Lizhan Xu, Tingting Xue, Yuxuan Yang, Lin Yang, Kai Yao, Huifeng Yao, Amirsaeed Yazdani, Michael Yip, Hwanseung Yoo, Fereshteh Yousefirizi, Shunkai Yu, Lei Yu, Jonathan Zamora, Ramy Ashraf Zeineldin, Dewen Zeng, Jianpeng Zhang, Bokai Zhang, Jiapeng Zhang, Fan Zhang, Huahong Zhang, Zhongchen Zhao, Zixuan Zhao, Jiachen Zhao, Can Zhao, Qingshuo Zheng, Yuheng Zhi, Ziqi Zhou, Baosheng Zou, Klaus Maier-Hein, Paul F. Jäger, Annette Kopp-Schneider, Lena Maier-Hein

Of these, 84% were based on standard architectures.

Interdisciplinary Discovery of Nanomaterials Based on Convolutional Neural Networks

no code implementations • 6 Dec 2022 • Tong Xie, Yuwei Wan, Weijian Li, Qingyuan Linghu, Shaozhou Wang, Yalun Cai, Han Liu, Chunyu Kit, Clara Grazian, Bram Hoex

The material science literature contains up-to-date and comprehensive scientific knowledge of materials.

KGML-xDTD: A Knowledge Graph-based Machine Learning Framework for Drug Treatment Prediction and Mechanism Description

no code implementations • 30 Nov 2022 • Chunyu Ma, Zhihan Zhou, Han Liu, David Koslicki

We believe it can effectively reduce "black-box" concerns and increase prediction confidence for drug repurposing based on predicted path-based explanations, and further accelerate the process of drug discovery for emerging diseases.

Aligning Offline Metrics and Human Judgments of Value for Code Generation Models

no code implementations • 29 Oct 2022 • Victor Dibia, Adam Fourney, Gagan Bansal, Forough Poursabzi-Sangdeh, Han Liu, Saleema Amershi

Large language models have demonstrated great potential to assist programmers in generating code.

Drug repositioning for Alzheimer's disease with transfer learning

no code implementations • 27 Oct 2022 • Yetao Wu, Han Liu, Jie Yan, Xiaolin Hu

After training, the model is used for virtual screening to find potential drugs for Alzheimer's disease (AD) treatment.

Evaluation of Synthetically Generated CT for use in Transcranial Focused Ultrasound Procedures

1 code implementation • 26 Oct 2022 • Han Liu, Michelle K. Sigona, Thomas J. Manuel, Li Min Chen, Benoit M. Dawant, Charles F. Caskey

Among 20 targets, differences in simulated peak pressure between rCT and sCT were largest without phase correction (12.4$\pm$8.1%) and smallest with Kranion phases (7.3$\pm$6.0%).

Adaptive Contrastive Learning with Dynamic Correlation for Multi-Phase Organ Segmentation

1 code implementation • 16 Oct 2022 • Ho Hin Lee, Yucheng Tang, Han Liu, Yubo Fan, Leon Y. Cai, Qi Yang, Xin Yu, Shunxing Bao, Yuankai Huo, Bennett A. Landman

We evaluate our proposed approach on multi-organ segmentation with both non-contrast CT (NCCT) datasets and the MICCAI 2015 BTCV Challenge contrast-enhanced CT (CECT) datasets.

Enhancing Data Diversity for Self-training Based Unsupervised Cross-modality Vestibular Schwannoma and Cochlea Segmentation

no code implementations • 23 Sep 2022 • Han Liu, Yubo Fan, Ipek Oguz, Benoit M. Dawant

Automatic segmentation of vestibular schwannoma (VS) and cochlea from magnetic resonance imaging can facilitate VS treatment planning.

Cats: Complementary CNN and Transformer Encoders for Segmentation

no code implementations • 24 Aug 2022 • Hao Li, Dewei Hu, Han Liu, Jiacheng Wang, Ipek Oguz

We fuse the information from the convolutional encoder and the transformer, and pass it to the decoder to obtain the results.

HPS-Det: Dynamic Sample Assignment with Hyper-Parameter Search for Object Detection

no code implementations • 23 Jul 2022 • Ji Liu, Dong Li, Zekun Li, Han Liu, Wenjing Ke, Lu Tian, Yi Shan

Sample assignment plays a prominent part in modern object detection approaches.

A Real-time Fire Segmentation Method Based on A Deep Learning Approach

1 code implementation • IFAC-PapersOnLine 2022 • Mengna Li, Youmin Zhang, Lingxia Mu, Jing Xin, Ziquan Yu, Shangbin Jiao, Han Liu, Guo Xie, Yi Yingmin

Different from deeplabv3+, in order to improve the segmentation speed, this paper uses the lightweight network mobilenetv3 to build a new deep convolutional neural network and does not use atrous convolution, but it will affect the segmentation accuracy.

Ranked #1 on Real-Time Semantic Segmentation on FLAME

Bregman Proximal Langevin Monte Carlo via Bregman--Moreau Envelopes

1 code implementation • 10 Jul 2022 • Tim Tsz-Kit Lau, Han Liu

The proposed algorithms extend existing Langevin Monte Carlo algorithms in two aspects -- the ability to sample nonsmooth distributions with mirror descent-like algorithms, and the use of the more general Bregman--Moreau envelope in place of the Moreau envelope as a smooth approximation of the nonsmooth part of the potential.

Label-enhanced Prototypical Network with Contrastive Learning for Multi-label Few-shot Aspect Category Detection

no code implementations • 14 Jun 2022 • Han Liu, Feng Zhang, Xiaotong Zhang, Siyang Zhao, Junjie Sun, Hong Yu, Xianchao Zhang

Multi-label aspect category detection allows a given review sentence to contain multiple aspect categories, which is shown to be more practical in sentiment analysis and is attracting increasing attention.

Bridging the Gap Between Training and Inference of Bayesian Controllable Language Models

no code implementations • 11 Jun 2022 • Han Liu, Bingning Wang, Ting Yao, Haijin Liang, Jianjin Xu, Xiaolin Hu

Large-scale pre-trained language models have achieved great success on natural language generation tasks.

A Simple Meta-learning Paradigm for Zero-shot Intent Classification with Mixture Attention Mechanism

no code implementations • 5 Jun 2022 • Han Liu, Siyang Zhao, Xiaotong Zhang, Feng Zhang, Junjie Sun, Hong Yu, Xianchao Zhang

Zero-shot intent classification is a vital and challenging task in dialogue systems, which aims to deal with numerous fast-emerging unacquainted intents without annotated training data.

Large-Scale Multi-Document Summarization with Information Extraction and Compression

no code implementations • 1 May 2022 • Ning Wang, Han Liu, Diego Klabjan

We develop an abstractive summarization framework independent of labeled data for multiple heterogeneous documents.

Wasserstein Distributionally Robust Optimization with Wasserstein Barycenters

no code implementations • 23 Mar 2022 • Tim Tsz-Kit Lau, Han Liu

On the other hand, in distributionally robust optimization, we seek data-driven decisions which perform well under the most adverse distribution from a nominal distribution constructed from data samples within a certain discrepancy of probability distributions.

Learning to Infer Belief Embedded Communication

no code implementations • 15 Mar 2022 • Guo Ye, Han Liu, Biswa Sengupta

In multi-agent collaboration problems with communication, an agent's ability to encode their intention and interpret other agents' strategies is critical for planning their future actions.

Switch Trajectory Transformer with Distributional Value Approximation for Multi-Task Reinforcement Learning

no code implementations • 14 Mar 2022 • Qinjie Lin, Han Liu, Biswa Sengupta

Our results also demonstrate the advantage of the switch transformer model for absorbing expert knowledge and the importance of value distribution in evaluating the trajectory.

Survival Prediction of Brain Cancer with Incomplete Radiology, Pathology, Genomics, and Demographic Data

no code implementations • 8 Mar 2022 • Can Cui, Han Liu, Quan Liu, Ruining Deng, Zuhayr Asad, Yaohong Wang, Shilin Zhao, Haichun Yang, Bennett A. Landman, Yuankai Huo

Thus, there are still open questions on how to effectively predict brain cancer survival from the incomplete radiological, pathological, genomic, and demographic data (e.g., one or more modalities might not be collected for a patient).

ModDrop++: A Dynamic Filter Network with Intra-subject Co-training for Multiple Sclerosis Lesion Segmentation with Missing Modalities

1 code implementation • 7 Mar 2022 • Han Liu, Yubo Fan, Hao Li, Jiacheng Wang, Dewei Hu, Can Cui, Ho Hin Lee, Huahong Zhang, Ipek Oguz

Previously, a training strategy termed Modality Dropout (ModDrop) has been applied to MS lesion segmentation to achieve the state-of-the-art performance with missing modality.

Modeling and Validating Temporal Rules with Semantic Petri-Net for Digital Twins

no code implementations • 4 Mar 2022 • Han Liu, Xiaoyu Song, Ge Gao, Hehua Zhang, Yu-Shen Liu, Ming Gu

Semantic rule checking on RDFS/OWL data has been widely used in the construction industry.

Synthetic CT Skull Generation for Transcranial MR Imaging-Guided Focused Ultrasound Interventions with Conditional Adversarial Networks

1 code implementation • 21 Feb 2022 • Han Liu, Michelle K. Sigona, Thomas J. Manuel, Li Min Chen, Charles F. Caskey, Benoit M. Dawant

Transcranial MRI-guided focused ultrasound (TcMRgFUS) is a therapeutic ultrasound method that focuses sound through the skull to a small region noninvasively under MRI guidance.

A Multi-rater Comparative Study of Automatic Target Localization Methods for Epilepsy Deep Brain Stimulation Procedures

no code implementations • 26 Jan 2022 • Han Liu, Kathryn L. Holloway, Dario J. Englot, Benoit M. Dawant

Epilepsy is the fourth most common neurological disorder and affects people of all ages worldwide.

Unsupervised Domain Adaptation for Vestibular Schwannoma and Cochlea Segmentation via Semi-supervised Learning and Label Fusion

no code implementations • 25 Jan 2022 • Han Liu, Yubo Fan, Can Cui, Dingjie Su, Andrew McNeil, Benoit M. Dawant

Automatic methods to segment the vestibular schwannoma (VS) tumors and the cochlea from magnetic resonance imaging (MRI) are critical to VS treatment planning.

CrossMoDA 2021 challenge: Benchmark of Cross-Modality Domain Adaptation techniques for Vestibular Schwannoma and Cochlea Segmentation

3 code implementations • 8 Jan 2022 • Reuben Dorent, Aaron Kujawa, Marina Ivory, Spyridon Bakas, Nicola Rieke, Samuel Joutard, Ben Glocker, Jorge Cardoso, Marc Modat, Kayhan Batmanghelich, Arseniy Belkov, Maria Baldeon Calisto, Jae Won Choi, Benoit M. Dawant, Hexin Dong, Sergio Escalera, Yubo Fan, Lasse Hansen, Mattias P. Heinrich, Smriti Joshi, Victoriya Kashtanova, Hyeon Gyu Kim, Satoshi Kondo, Christian N. Kruse, Susana K. Lai-Yuen, Hao Li, Han Liu, Buntheng Ly, Ipek Oguz, Hyungseob Shin, Boris Shirokikh, Zixian Su, Guotai Wang, Jianghao Wu, Yanwu Xu, Kai Yao, Li Zhang, Sebastien Ourselin, Jonathan Shapey, Tom Vercauteren

The aim was to automatically perform unilateral VS and bilateral cochlea segmentation on hrT2 as provided in the testing set (N=137).

A Survey on Epistemic (Model) Uncertainty in Supervised Learning: Recent Advances and Applications

no code implementations • 3 Nov 2021 • Xinlei Zhou, Han Liu, Farhad Pourpanah, Tieyong Zeng, XiZhao Wang

This paper provides a comprehensive review of epistemic uncertainty learning techniques in supervised learning over the last five years.

An Explicit-Joint and Supervised-Contrastive Learning Framework for Few-Shot Intent Classification and Slot Filling

no code implementations • Findings (EMNLP) 2021 • Han Liu, Feng Zhang, Xiaotong Zhang, Siyang Zhao, Xianchao Zhang

Intent classification (IC) and slot filling (SF) are critical building blocks in task-oriented dialogue systems.

Learning Predictive, Online Approximations of Explanatory, Offline Algorithms

no code implementations • 29 Sep 2021 • Mattson Thieme, Ammar Gilani, Han Liu

In this work, we introduce a general methodology for approximating offline algorithms in online settings.

Reinforcement Learning under a Multi-agent Predictive State Representation Model: Method and Theory

no code implementations • ICLR 2022 • Zhi Zhang, Zhuoran Yang, Han Liu, Pratap Tokekar, Furong Huang

This paper proposes a new algorithm for learning the optimal policies under a novel multi-agent predictive state representation reinforcement learning model.

Cross-Modality Domain Adaptation for Vestibular Schwannoma and Cochlea Segmentation

no code implementations • 13 Sep 2021 • Han Liu, Yubo Fan, Can Cui, Dingjie Su, Andrew McNeil, Benoit M. Dawant

Automatic methods to segment the vestibular schwannoma (VS) tumors and the cochlea from magnetic resonance imaging (MRI) are critical to VS treatment planning.

Posterior Promoted GAN With Distribution Discriminator for Unsupervised Image Synthesis

no code implementations • CVPR 2021 • Xianchao Zhang, Ziyang Cheng, Xiaotong Zhang, Han Liu

In this paper, we propose a novel variant of GAN, Posterior Promoted GAN (P2GAN), which promotes generator with the real information in the posterior distribution produced by discriminator.

Review Polarity-wise Recommender

1 code implementation • 8 Jun 2021 • Han Liu, Yangyang Guo, Jianhua Yin, Zan Gao, Liqiang Nie

To be specific, in this model, positive and negative reviews are separately gathered and utilized to model the user-preferred and user-rejected aspects, respectively.

Trade the Event: Corporate Events Detection for News-Based Event-Driven Trading

1 code implementation • Findings (ACL) 2021 • Zhihan Zhou, Liqian Ma, Han Liu

In this paper, we introduce an event-driven trading strategy that predicts stock movements by detecting corporate events from news articles.

Cross-Dataset Collaborative Learning for Semantic Segmentation in Autonomous Driving

no code implementations • 21 Mar 2021 • Li Wang, Dong Li, Han Liu, Jinzhang Peng, Lu Tian, Yi Shan

Our goal is to train a unified model for improving the performance in each dataset by leveraging information from all the datasets.

BLOCKEYE: Hunting For DeFi Attacks on Blockchain

no code implementations • 4 Mar 2021 • Bin Wang, Han Liu, Chao Liu, Zhiqiang Yang, Qian Ren, Huixuan Zheng, Hong Lei

We applied BLOCKEYE to several popular DeFi projects and discovered potential security attacks that were previously unreported.

Cryptography and Security Computers and Society

Converse, Focus and Guess -- Towards Multi-Document Driven Dialogue

1 code implementation • 4 Feb 2021 • Han Liu, Caixia Yuan, Xiaojie Wang, Yushu Yang, Huixing Jiang, Zhongyuan Wang

We propose a novel task, Multi-Document Driven Dialogue (MD3), in which an agent can guess the target document that the user is interested in by leading a dialogue.

Understanding the Effect of Out-of-distribution Examples and Interactive Explanations on Human-AI Decision Making

no code implementations • 13 Jan 2021 • Han Liu, Vivian Lai, Chenhao Tan

Although AI holds promise for improving human decision making in societally critical domains, it remains an open question how human-AI teams can reliably outperform AI alone and human alone in challenging prediction tasks (also known as complementary performance).

Morphology Matters: A Multilingual Language Modeling Analysis

1 code implementation • 11 Dec 2020 • Hyunji Hayley Park, Katherine J. Zhang, Coleman Haley, Kenneth Steimel, Han Liu, Lane Schwartz

We fill in missing typological data for several languages and consider corpus-based measures of morphological complexity in addition to expert-produced typological features.

Uncertainty Estimation in Medical Image Localization: Towards Robust Anterior Thalamus Targeting for Deep Brain Stimulation

no code implementations • 3 Nov 2020 • Han Liu, Can Cui, Dario J. Englot, Benoit M. Dawant

Atlas-based methods are the standard approaches for automatic targeting of the Anterior Nucleus of the Thalamus (ANT) for Deep Brain Stimulation (DBS), but these are known to lack robustness when anatomic differences between atlases and subjects are large.

Label-Wise Document Pre-Training for Multi-Label Text Classification

1 code implementation • 15 Aug 2020 • Han Liu, Caixia Yuan, Xiaojie Wang

A major challenge of multi-label text classification (MLTC) is to stimulatingly exploit possible label differences and label correlations.

Ranked #1 on Multi-Label Text Classification on AAPD (Micro F1 metric)

Unknown Intent Detection Using Gaussian Mixture Model with an Application to Zero-shot Intent Classification

1 code implementation • ACL 2020 • Guangfeng Yan, Lu Fan, Qimai Li, Han Liu, Xiaotong Zhang, Xiao-Ming Wu, Albert Y. S. Lam

User intent classification plays a vital role in dialogue systems.

The flare Package for High Dimensional Linear Regression and Precision Matrix Estimation in R

no code implementations • 27 Jun 2020 • Xingguo Li, Tuo Zhao, Xiaoming Yuan, Han Liu

This paper describes an R package named flare, which implements a family of new high dimensional regression methods (LAD Lasso, SQRT Lasso, $\ell_q$ Lasso, and Dantzig selector) and their extensions to sparse precision matrix estimation (TIGER and CLIME).

Picasso: A Sparse Learning Library for High Dimensional Data Analysis in R and Python

1 code implementation • 27 Jun 2020 • Jason Ge, Xingguo Li, Haoming Jiang, Han Liu, Tong Zhang, Mengdi Wang, Tuo Zhao

We describe a new library named picasso, which implements a unified framework of pathwise coordinate optimization for a variety of sparse learning problems (e.g., sparse linear regression, sparse logistic regression, sparse Poisson regression and scaled sparse linear regression) combined with efficient active set selection strategies.

The huge Package for High-dimensional Undirected Graph Estimation in R

no code implementations • 26 Jun 2020 • Tuo Zhao, Han Liu, Kathryn Roeder, John Lafferty, Larry Wasserman

We describe an R package named huge which provides easy-to-use functions for estimating high dimensional undirected graphs from data.

Few-shot Slot Tagging with Collapsed Dependency Transfer and Label-enhanced Task-adaptive Projection Network

2 code implementations • ACL 2020 • Yutai Hou, Wanxiang Che, Yongkui Lai, Zhihan Zhou, Yijia Liu, Han Liu, Ting Liu

In this paper, we explore the slot tagging with only a few labeled support sentences (a.k.a.

A Deep Learning based Wearable Healthcare IoT Device for AI-enabled Hearing Assistance Automation

no code implementations • 16 May 2020 • Fraser Young, L. Zhang, Richard Jiang, Han Liu, Conor Wall

With the recent booming of artificial intelligence (AI), particularly deep learning techniques, digital healthcare is one of the prevalent areas that could gain benefits from AI-enabled functionality.

Neural Polysynthetic Language Modelling

no code implementations • 11 May 2020 • Lane Schwartz, Francis Tyers, Lori Levin, Christo Kirov, Patrick Littell, Chi-kiu Lo, Emily Prud'hommeaux, Hyunji Hayley Park, Kenneth Steimel, Rebecca Knowles, Jeffrey Micher, Lonny Strunk, Han Liu, Coleman Haley, Katherine J. Zhang, Robbie Jimmerson, Vasilisa Andriyanets, Aldrian Obaja Muis, Naoki Otani, Jong Hyuk Park, Zhisong Zhang

In the literature, languages like Finnish or Turkish are held up as extreme examples of complexity that challenge common modelling assumptions.

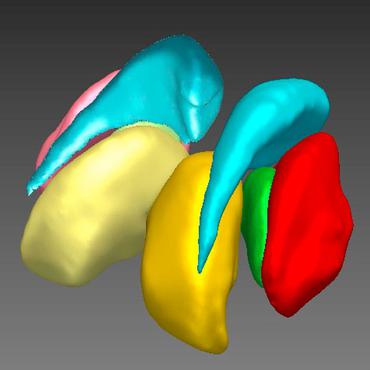

FAME: 3D Shape Generation via Functionality-Aware Model Evolution

1 code implementation • 9 May 2020 • Yanran Guan, Han Liu, Kun Liu, Kangxue Yin, Ruizhen Hu, Oliver van Kaick, Yan Zhang, Ersin Yumer, Nathan Carr, Radomir Mech, Hao Zhang

Our tool supports constrained modeling, allowing users to restrict or steer the model evolution with functionality labels.

Graphics

Scmhl5 at TRAC-2 Shared Task on Aggression Identification: Bert Based Ensemble Learning Approach

no code implementations • LREC 2020 • Han Liu, Pete Burnap, Wafa Alorainy, Matthew Williams

This paper presents a system developed during our participation (team name: scmhl5) in the TRAC-2 Shared Task on aggression identification.

EQL -- an extremely easy to learn knowledge graph query language, achieving highspeed and precise search

no code implementations • 19 Mar 2020 • Han Liu, Shantao Liu

EQL, also known as Extremely Simple Query Language, can be widely used in knowledge graphs, precise search, strong artificial intelligence, databases, smart speakers, patent search and other fields.

"Why is 'Chicago' deceptive?" Towards Building Model-Driven Tutorials for Humans

no code implementations • 14 Jan 2020 • Vivian Lai, Han Liu, Chenhao Tan

To support human decision making with machine learning models, we often need to elucidate patterns embedded in the models that are unsalient, unknown, or counterintuitive to humans.

Automatic quality assessment for 2D fetal sonographic standard plane based on multi-task learning

no code implementations • 11 Dec 2019 • Hong Luo, Han Liu, Kejun Li, Bo Zhang

An essential criterion for FS image quality control is that all the essential anatomical structures in the section should appear full and remarkable with a clear boundary.

Reconstructing Capsule Networks for Zero-shot Intent Classification

1 code implementation • IJCNLP 2019 • Han Liu, Xiaotong Zhang, Lu Fan, Xu Fu, i, Qimai Li, Xiao-Ming Wu, Albert Y. S. Lam

With the burgeoning of conversational AI, existing systems are not capable of handling numerous fast-emerging intents, which motivates zero-shot intent classification.

Clustering Uncertain Data via Representative Possible Worlds with Consistency Learning

no code implementations • 27 Sep 2019 • Han Liu, Xianchao Zhang, Xiaotong Zhang, Qimai Li, Xiao-Ming Wu

However, there are two issues in existing possible world based algorithms: (1) They rely on all the possible worlds and treat them equally, but some marginal possible worlds may cause negative effects.

Dimensionwise Separable 2-D Graph Convolution for Unsupervised and Semi-Supervised Learning on Graphs

1 code implementation • 26 Sep 2019 • Qimai Li, Xiaotong Zhang, Han Liu, Quanyu Dai, Xiao-Ming Wu

Graph convolutional neural networks (GCN) have been the model of choice for graph representation learning, which is mainly due to the effective design of graph convolution that computes the representation of a node by aggregating those of its neighbors.

Attributed Graph Learning with 2-D Graph Convolution

no code implementations • 25 Sep 2019 • Qimai Li, Xiaotong Zhang, Han Liu, Xiao-Ming Wu

Graph convolutional neural networks have demonstrated promising performance in attributed graph learning, thanks to the use of graph convolution that effectively combines graph structures and node features for learning node representations.

AdvCodec: Towards A Unified Framework for Adversarial Text Generation

no code implementations • 25 Sep 2019 • Boxin Wang, Hengzhi Pei, Han Liu, Bo Li

In particular, we propose a tree based autoencoder to encode discrete text data into continuous vector space, upon which we optimize the adversarial perturbation.

Scalable Differentially Private Data Generation via Private Aggregation of Teacher Ensembles

no code implementations • 25 Sep 2019 • Yunhui Long, Suxin Lin, Zhuolin Yang, Carl A. Gunter, Han Liu, Bo Li

We present a novel approach named G-PATE for training a differentially private data generator.

Fast Low-rank Metric Learning for Large-scale and High-dimensional Data

1 code implementation • NeurIPS 2019 • Han Liu, Zhizhong Han, Yu-Shen Liu, Ming Gu

Low-rank metric learning aims to learn better discrimination of data subject to low-rank constraints.

More Supervision, Less Computation: Statistical-Computational Tradeoffs in Weakly Supervised Learning

no code implementations • NeurIPS 2016 • Xinyang Yi, Zhaoran Wang, Zhuoran Yang, Constantine Caramanis, Han Liu

We consider the weakly supervised binary classification problem where the labels are randomly flipped with probability $1-\alpha$.
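
The flipping noise named in this excerpt can be illustrated with a minimal sketch. It assumes labels in {-1, +1} and independent flips with probability $1-\alpha$; the function name `flip_labels` and the sample size are hypothetical and not taken from the paper.

```python
import random

def flip_labels(labels, alpha, seed=0):
    """Flip each label in {-1, +1} independently with probability 1 - alpha.

    The label encoding and the independence of the flips are assumptions
    made for this illustration only.
    """
    rng = random.Random(seed)
    return [-y if rng.random() < 1.0 - alpha else y for y in labels]

# Balanced clean labels, then a noisy copy with alpha = 0.8.
clean = [1, -1] * 5000
noisy = flip_labels(clean, alpha=0.8)

# The empirical flip rate should be close to 1 - alpha.
flip_rate = sum(c != n for c, n in zip(clean, noisy)) / len(clean)
print(f"empirical flip rate: {flip_rate:.3f}")
```

With `alpha=1.0` the labels are returned unchanged, and with `alpha=0.0` every label is flipped.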

Few-Shot Sequence Labeling with Label Dependency Transfer and Pair-wise Embedding

no code implementations • 20 Jun 2019 • Yutai Hou, Zhihan Zhou, Yijia Liu, Ning Wang, Wanxiang Che, Han Liu, Ting Liu

It calculates emission score with similarity based methods and obtains transition score with a specially designed transfer mechanism.

Attributed Graph Clustering via Adaptive Graph Convolution

1 code implementation • 4 Jun 2019 • Xiaotong Zhang, Han Liu, Qimai Li, Xiao-Ming Wu

Attributed graph clustering is challenging as it requires joint modelling of graph structures and node attributes.

Ranked #3 on Graph Clustering on Cora

GLAD: Learning Sparse Graph Recovery

1 code implementation • ICLR 2020 • Harsh Shrivastava, Xinshi Chen, Binghong Chen, Guanghui Lan, Srinivas Aluru, Han Liu, Le Song

Recently, there is a surge of interest to learn algorithms directly based on data, and in this case, learn to map empirical covariance to the sparse precision matrix.

Estimating and Inferring the Maximum Degree of Stimulus-Locked Time-Varying Brain Connectivity Networks

no code implementations • 28 May 2019 • Kean Ming Tan, Junwei Lu, Tong Zhang, Han Liu

To address this issue, neuroscientists have been measuring brain activity under natural viewing experiments in which the subjects are given continuous stimuli, such as watching a movie or listening to a story.

Learning to Plan in High Dimensions via Neural Exploration-Exploitation Trees

1 code implementation • ICLR 2020 • Binghong Chen, Bo Dai, Qinjie Lin, Guo Ye, Han Liu, Le Song

We propose a meta path planning algorithm named Neural Exploration-Exploitation Trees (NEXT) for learning from prior experience for solving new path planning problems in high dimensional continuous state and action spaces.

Label Efficient Semi-Supervised Learning via Graph Filtering

1 code implementation • CVPR 2019 • Qimai Li, Xiao-Ming Wu, Han Liu, Xiaotong Zhang, Zhichao Guan

However, existing graph-based methods either are limited in their ability to jointly model graph structures and data features, such as the classical label propagation methods, or require a considerable amount of labeled data for training and validation due to high model complexity, such as the recent neural-network-based methods.

Finite-Sample Analysis For Decentralized Batch Multi-Agent Reinforcement Learning With Networked Agents

no code implementations • 6 Dec 2018 • Kaiqing Zhang, Zhuoran Yang, Han Liu, Tong Zhang, Tamer Başar

This work appears to be the first finite-sample analysis for batch MARL, a step towards rigorous theoretical understanding of general MARL algorithms in the finite-sample regime.

Multi-agent Reinforcement Learning

reinforcement-learning

Exponentially Weighted Imitation Learning for Batched Historical Data

1 code implementation • NeurIPS 2018 • Qing Wang, Jiechao Xiong, Lei Han, Peng Sun, Han Liu, Tong Zhang

We consider deep policy learning with only batched historical trajectories.

Sketching Method for Large Scale Combinatorial Inference

no code implementations • NeurIPS 2018 • Wei Sun, Junwei Lu, Han Liu

In order to test the hypotheses on their topological structures, we propose two adjacency matrix sketching frameworks: neighborhood sketching and subgraph sketching.

Performance assessment of the deep learning technologies in grading glaucoma severity

no code implementations • 31 Oct 2018 • Yi Zhen, Lei Wang, Han Liu, Jian Zhang, Jiantao Pu

Among these CNNs, the DenseNet had the highest classification accuracy (i.e., 75.50%) based on pre-trained weights when using global ROIs, as compared to 65.50% when using local ROIs.

SDFN: Segmentation-based Deep Fusion Network for Thoracic Disease Classification in Chest X-ray Images

no code implementations • 30 Oct 2018 • Han Liu, Lei Wang, Yandong Nan, Faguang Jin, Qi Wang, Jiantao Pu

Two CNN-based classification models were then used as feature extractors to obtain the discriminative features of the entire CXR images and the cropped lung region images.

Super-pixel cloud detection using Hierarchical Fusion CNN

no code implementations • 19 Oct 2018 • Han Liu, Dan Zeng, Qi Tian

Secondly, super-pixel level database is used to train our cloud detection models based on CNN and deep forest.

Parametrized Deep Q-Networks Learning: Reinforcement Learning with Discrete-Continuous Hybrid Action Space

5 code implementations • 10 Oct 2018 • Jiechao Xiong, Qing Wang, Zhuoran Yang, Peng Sun, Lei Han, Yang Zheng, Haobo Fu, Tong Zhang, Ji Liu, Han Liu

Most existing deep reinforcement learning (DRL) frameworks consider either discrete action space or continuous action space solely.

Fully Implicit Online Learning

no code implementations • 25 Sep 2018 • Chaobing Song, Ji Liu, Han Liu, Yong Jiang, Tong Zhang

Regularized online learning is widely used in machine learning applications.

High-Temperature Structure Detection in Ferromagnets

no code implementations • 21 Sep 2018 • Yuan Cao, Matey Neykov, Han Liu

The goal is to distinguish whether the underlying graph is empty, i.e., the model consists of independent Rademacher variables, versus the alternative that the underlying graph contains a subgraph of a certain structure.

TStarBots: Defeating the Cheating Level Builtin AI in StarCraft II in the Full Game

3 code implementations • 19 Sep 2018 • Peng Sun, Xinghai Sun, Lei Han, Jiechao Xiong, Qing Wang, Bo Li, Yang Zheng, Ji Liu, Yongsheng Liu, Han Liu, Tong Zhang

Both TStarBot1 and TStarBot2 are able to defeat the built-in AI agents from level 1 to level 10 in a full game (1v1 Zerg-vs-Zerg game on the AbyssalReef map), noting that level 8, level 9, and level 10 are cheating agents with unfair advantages such as full vision on the whole map and resource harvest boosting.

A convex formulation for high-dimensional sparse sliced inverse regression

no code implementations • 17 Sep 2018 • Kean Ming Tan, Zhaoran Wang, Tong Zhang, Han Liu, R. Dennis Cook

Sliced inverse regression is a popular tool for sufficient dimension reduction, which replaces covariates with a minimal set of their linear combinations without loss of information on the conditional distribution of the response given the covariates.

Factorized Q-Learning for Large-Scale Multi-Agent Systems

no code implementations • 11 Sep 2018 • Yong Chen, Ming Zhou, Ying Wen, Yaodong Yang, Yufeng Su, Wei-Nan Zhang, Dell Zhang, Jun Wang, Han Liu

Deep Q-learning has achieved significant success in single-agent decision making tasks.

Multiagent Systems

Online ICA: Understanding Global Dynamics of Nonconvex Optimization via Diffusion Processes

no code implementations • NeurIPS 2016 • Chris Junchi Li, Zhaoran Wang, Han Liu

Despite the empirical success of nonconvex statistical optimization methods, their global dynamics, especially convergence to the desirable local minima, remain less well understood in theory.

Diffusion Approximations for Online Principal Component Estimation and Global Convergence

no code implementations • NeurIPS 2017 • Chris Junchi Li, Mengdi Wang, Han Liu, Tong Zhang

In this paper, we propose to adopt diffusion approximation tools to study the dynamics of Oja's iteration, which is an online stochastic gradient descent method for principal component analysis.
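
For readers unfamiliar with Oja's iteration, the following is a minimal sketch of the textbook update (a stochastic gradient step followed by renormalization), not the paper's analysis. The constant step size, the two-dimensional Gaussian data, and the names `oja_top_component`, `u`, and `v` are assumptions chosen for illustration.

```python
import math
import random

def oja_top_component(samples, dim, step=0.01):
    """Estimate the leading principal direction with Oja's iteration.

    Each sample x triggers the update w <- w + step * (x . w) * x,
    followed by projection back onto the unit sphere.
    """
    w = [1.0] + [0.0] * (dim - 1)  # arbitrary unit-norm starting point
    for x in samples:
        proj = sum(xi * wi for xi, wi in zip(x, w))
        w = [wi + step * proj * xi for wi, xi in zip(w, x)]
        norm = math.sqrt(sum(wi * wi for wi in w))
        w = [wi / norm for wi in w]
    return w

# Synthetic zero-mean data whose covariance has top eigenvector u
# (variance 9) and second eigenvector v (variance 0.25).
rng = random.Random(0)
u = (0.6, 0.8)
v = (-0.8, 0.6)
samples = []
for _ in range(5000):
    a = 3.0 * rng.gauss(0.0, 1.0)
    b = 0.5 * rng.gauss(0.0, 1.0)
    samples.append([a * u[0] + b * v[0], a * u[1] + b * v[1]])

w_hat = oja_top_component(samples, dim=2)
# The sign of a principal direction is not identifiable, so compare |w . u|.
alignment = abs(w_hat[0] * u[0] + w_hat[1] * u[1])
print(f"alignment with true direction: {alignment:.4f}")
```

Only one sample is held in memory per update, which is what makes the method an online one.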

Curse of Heterogeneity: Computational Barriers in Sparse Mixture Models and Phase Retrieval

no code implementations • 21 Aug 2018 • Jianqing Fan, Han Liu, Zhaoran Wang, Zhuoran Yang

We study the fundamental tradeoffs between statistical accuracy and computational tractability in the analysis of high dimensional heterogeneous data.

Graphical Nonconvex Optimization via an Adaptive Convex Relaxation

no code implementations • ICML 2018 • Qiang Sun, Kean Ming Tan, Han Liu, Tong Zhang

Our proposal is computationally tractable and produces an estimator that achieves the oracle rate of convergence.

The Edge Density Barrier: Computational-Statistical Tradeoffs in Combinatorial Inference

no code implementations • ICML 2018 • Hao Lu, Yuan Cao, Zhuoran Yang, Junwei Lu, Han Liu, Zhaoran Wang

We study the hypothesis testing problem of inferring the existence of combinatorial structures in undirected graphical models.

Marginal Policy Gradients: A Unified Family of Estimators for Bounded Action Spaces with Applications

1 code implementation • ICLR 2019 • Carson Eisenach, Haichuan Yang, Ji Liu, Han Liu

In the former, an agent learns a policy over $\mathbb{R}^d$ and in the latter, over a discrete set of actions each of which is parametrized by a continuous parameter.

Efficient, Certifiably Optimal Clustering with Applications to Latent Variable Graphical Models

2 code implementations • 1 Jun 2018 • Carson Eisenach, Han Liu

Compared to the naive interior point method, our method reduces the computational complexity of solving the SDP from $\tilde{O}(d^7\log\epsilon^{-1})$ to $\tilde{O}(d^{6}K^{-2}\epsilon^{-1})$ arithmetic operations for an $\epsilon$-optimal solution.

Feedback-Based Tree Search for Reinforcement Learning

no code implementations • ICML 2018 • Daniel R. Jiang, Emmanuel Ekwedike, Han Liu

Inspired by recent successes of Monte-Carlo tree search (MCTS) in a number of artificial intelligence (AI) application domains, we propose a model-based reinforcement learning (RL) technique that iteratively applies MCTS on batches of small, finite-horizon versions of the original infinite-horizon Markov decision process.

Model-based Reinforcement Learning

reinforcement-learning

Discrete Factorization Machines for Fast Feature-based Recommendation

1 code implementation • 6 May 2018 • Han Liu, Xiangnan He, Fuli Feng, Liqiang Nie, Rui Liu, Hanwang Zhang

In this paper, we develop a generic feature-based recommendation model, called Discrete Factorization Machine (DFM), for fast and accurate recommendation.

Fully Decentralized Multi-Agent Reinforcement Learning with Networked Agents

5 code implementations • ICML 2018 • Kaiqing Zhang, Zhuoran Yang, Han Liu, Tong Zhang, Tamer Başar

To this end, we propose two decentralized actor-critic algorithms with function approximation, which are applicable to large-scale MARL problems where both the number of states and the number of agents are massively large.

Multi-agent Reinforcement Learning

reinforcement-learning

The Enemy Among Us: Detecting Hate Speech with Threats Based 'Othering' Language Embeddings

no code implementations • 23 Jan 2018 • Wafa Alorainy, Pete Burnap, Han Liu, Matthew Williams

Offensive or antagonistic language targeted at individuals and social groups based on their personal characteristics (also known as cyber hate speech or cyberhate) has been frequently posted and widely circulated via the World Wide Web.

PARAMETRIZED DEEP Q-NETWORKS LEARNING: PLAYING ONLINE BATTLE ARENA WITH DISCRETE-CONTINUOUS HYBRID ACTION SPACE

1 code implementation • ICLR 2018 • Jiechao Xiong, Qing Wang, Zhuoran Yang, Peng Sun, Yang Zheng, Lei Han, Haobo Fu, Xiangru Lian, Carson Eisenach, Haichuan Yang, Emmanuel Ekwedike, Bei Peng, Haoyue Gao, Tong Zhang, Ji Liu, Han Liu

Most existing deep reinforcement learning (DRL) frameworks consider action spaces that are either discrete or continuous.

Estimating High-dimensional Non-Gaussian Multiple Index Models via Stein’s Lemma

no code implementations • NeurIPS 2017 • Zhuoran Yang, Krishnakumar Balasubramanian, Zhaoran Wang, Han Liu

We consider estimating the parametric components of semiparametric multi-index models in high dimensions.

Parametric Simplex Method for Sparse Learning

no code implementations • NeurIPS 2017 • Haotian Pang, Han Liu, Robert J. Vanderbei, Tuo Zhao

High dimensional sparse learning has imposed a great computational challenge to large scale data analysis.

On Stein's Identity and Near-Optimal Estimation in High-dimensional Index Models

no code implementations • 26 Sep 2017 • Zhuoran Yang, Krishnakumar Balasubramanian, Han Liu

We consider estimating the parametric components of semi-parametric multiple index models in a high-dimensional and non-Gaussian setting.

Inter-Subject Analysis: Inferring Sparse Interactions with Dense Intra-Graphs

no code implementations • 20 Sep 2017 • Cong Ma, Junwei Lu, Han Liu

Our framework is based on the Gaussian graphical models, under which ISA can be converted to the problem of estimation and inference of the inter-subject precision matrix.

Property Testing in High Dimensional Ising models

no code implementations • 20 Sep 2017 • Matey Neykov, Han Liu

In terms of methodological development, we propose two types of correlation based tests: computationally efficient screening for ferromagnets, and score type tests for general models, including a fast cycle presence test.

High-dimensional Non-Gaussian Single Index Models via Thresholded Score Function Estimation

no code implementations • ICML 2017 • Zhuoran Yang, Krishnakumar Balasubramanian, Han Liu

We consider estimating the parametric component of single index models in high dimensions.

Adaptive Inferential Method for Monotone Graph Invariants

no code implementations • 28 Jul 2017 • Junwei Lu, Matey Neykov, Han Liu

In this paper, we propose a new inferential framework for testing nested multiple hypotheses and constructing confidence intervals of the unknown graph invariants under undirected graphical models.

CANE: Context-Aware Network Embedding for Relation Modeling

1 code implementation • ACL 2017 • Cunchao Tu, Han Liu, Zhiyuan Liu, Maosong Sun

Network embedding (NE) is playing a critical role in network analysis, due to its ability to represent vertices with efficient low-dimensional embedding vectors.

Graphical Nonconvex Optimization for Optimal Estimation in Gaussian Graphical Models

no code implementations • 4 Jun 2017 • Qiang Sun, Kean Ming Tan, Han Liu, Tong Zhang

Our proposal is computationally tractable and produces an estimator that achieves the oracle rate of convergence.

Continual Learning in Generative Adversarial Nets

no code implementations • 23 May 2017 • Ari Seff, Alex Beatson, Daniel Suo, Han Liu

Developments in deep generative models have allowed for tractable learning of high-dimensional data distributions.

Homotopy Parametric Simplex Method for Sparse Learning

no code implementations • 4 Apr 2017 • Haotian Pang, Robert Vanderbei, Han Liu, Tuo Zhao

High dimensional sparse learning has imposed a great computational challenge to large scale data analysis.

Symmetry, Saddle Points, and Global Optimization Landscape of Nonconvex Matrix Factorization

no code implementations • 29 Dec 2016 • Xingguo Li, Junwei Lu, Raman Arora, Jarvis Haupt, Han Liu, Zhaoran Wang, Tuo Zhao

We propose a general theory for studying the landscape of nonconvex optimization with underlying symmetric structures for a class of machine learning problems (e.g., low-rank matrix factorization, phase retrieval, and deep linear neural networks).

Blind Attacks on Machine Learners

no code implementations • NeurIPS 2016 • Alex Beatson, Zhaoran Wang, Han Liu

We study the potential of a “blind attacker” to provably limit a learner’s performance by data injection attack without observing the learner’s training set or any parameter of the distribution from which it is drawn.

Agnostic Estimation for Misspecified Phase Retrieval Models

no code implementations • NeurIPS 2016 • Matey Neykov, Zhaoran Wang, Han Liu

The goal of noisy high-dimensional phase retrieval is to estimate an $s$-sparse parameter $\boldsymbol{\beta}^*\in \mathbb{R}^d$ from $n$ realizations of the model $Y = (\boldsymbol{X}^{\top} \boldsymbol{\beta}^*)^2 + \varepsilon$.
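
The observation model $Y = (\boldsymbol{X}^{\top} \boldsymbol{\beta}^*)^2 + \varepsilon$ can be made concrete with a short data-generating sketch. The standard Gaussian design, Gaussian noise, and $\pm 1$ nonzero coefficients are assumptions for illustration; the excerpt does not specify them, and the helper names are hypothetical.

```python
import random

def sparse_beta(d, s, rng):
    """Return a d-dimensional vector with exactly s nonzero entries (+1 or -1)."""
    beta = [0.0] * d
    for j in rng.sample(range(d), s):
        beta[j] = rng.choice([-1.0, 1.0])
    return beta

def draw_phase_retrieval(n, beta, noise_sd, rng):
    """Draw n pairs (x, y) with y = (x . beta)^2 + eps.

    Assumes x has i.i.d. standard normal entries and eps ~ N(0, noise_sd^2).
    """
    data = []
    for _ in range(n):
        x = [rng.gauss(0.0, 1.0) for _ in beta]
        signal = sum(xj * bj for xj, bj in zip(x, beta))
        y = signal ** 2 + rng.gauss(0.0, noise_sd)
        data.append((x, y))
    return data

rng = random.Random(0)
beta_star = sparse_beta(d=50, s=5, rng=rng)
data = draw_phase_retrieval(n=200, beta=beta_star, noise_sd=0.1, rng=rng)
print(len(data), sum(b != 0.0 for b in beta_star))
```

Because the response depends on $\boldsymbol{\beta}^*$ only through a square, $\boldsymbol{\beta}^*$ and $-\boldsymbol{\beta}^*$ generate the same distribution, so the parameter can be recovered at most up to sign.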

Max-Norm Optimization for Robust Matrix Recovery

no code implementations • 24 Sep 2016 • Ethan X. Fang, Han Liu, Kim-Chuan Toh, Wen-Xin Zhou

This paper studies the matrix completion problem under arbitrary sampling schemes.

Tensor Graphical Model: Non-convex Optimization and Statistical Inference

no code implementations • 15 Sep 2016 • Xiang Lyu, Will Wei Sun, Zhaoran Wang, Han Liu, Jian Yang, Guang Cheng

We consider the estimation and inference of graphical models that characterize the dependency structure of high-dimensional tensor-valued data.

Combinatorial Inference for Graphical Models

no code implementations • 10 Aug 2016 • Matey Neykov, Junwei Lu, Han Liu

We propose a new family of combinatorial inference problems for graphical models.

On Faster Convergence of Cyclic Block Coordinate Descent-type Methods for Strongly Convex Minimization

no code implementations • 10 Jul 2016 • Xingguo Li, Tuo Zhao, Raman Arora, Han Liu, Mingyi Hong

In particular, we first show that for a family of quadratic minimization problems, the iteration complexity $\mathcal{O}(\log^2(p)\cdot\log(1/\epsilon))$ of the CBCD-type methods matches that of the GD methods in terms of dependency on $p$, up to a $\log^2 p$ factor.

On Fast Convergence of Proximal Algorithms for SQRT-Lasso Optimization: Don't Worry About Its Nonsmooth Loss Function

no code implementations • 25 May 2016 • Xingguo Li, Haoming Jiang, Jarvis Haupt, Raman Arora, Han Liu, Mingyi Hong, Tuo Zhao

Many machine learning techniques sacrifice convenient computational structures to gain estimation robustness and modeling flexibility.

Nonconvex Sparse Learning via Stochastic Optimization with Progressive Variance Reduction

no code implementations • 9 May 2016 • Xingguo Li, Raman Arora, Han Liu, Jarvis Haupt, Tuo Zhao

We propose a stochastic variance reduced optimization algorithm for solving sparse learning problems with cardinality constraints.

Sparse Generalized Eigenvalue Problem: Optimal Statistical Rates via Truncated Rayleigh Flow

no code implementations • 29 Apr 2016 • Kean Ming Tan, Zhaoran Wang, Han Liu, Tong Zhang

The sparse generalized eigenvalue problem (GEP) plays a pivotal role in a large family of high-dimensional statistical models, including sparse Fisher's discriminant analysis, canonical correlation analysis, and sufficient dimension reduction.

Near-Optimal Stochastic Approximation for Online Principal Component Estimation

no code implementations • 16 Mar 2016 • Chris Junchi Li, Mengdi Wang, Han Liu, Tong Zhang

We prove for the first time a nearly optimal finite-sample error bound for the online PCA algorithm.

Sharp Computational-Statistical Phase Transitions via Oracle Computational Model

no code implementations • 30 Dec 2015 • Zhaoran Wang, Quanquan Gu, Han Liu

Based upon an oracle model of computation, which captures the interactions between algorithms and data, we establish a general lower bound that explicitly connects the minimum testing risk under computational budget constraints with the intrinsic probabilistic and combinatorial structures of statistical problems.

Post-Regularization Inference for Time-Varying Nonparanormal Graphical Models

no code implementations • 28 Dec 2015 • Junwei Lu, Mladen Kolar, Han Liu

The testing procedures are based on a high dimensional, debiasing-free moment estimator, which uses a novel kernel smoothed Kendall's tau correlation matrix as an input statistic.

Non-convex Statistical Optimization for Sparse Tensor Graphical Model

no code implementations • NeurIPS 2015 • Wei Sun, Zhaoran Wang, Han Liu, Guang Cheng

We consider the estimation of sparse graphical models that characterize the dependency structure of high-dimensional tensor-valued data.

High Dimensional EM Algorithm: Statistical Optimization and Asymptotic Normality

no code implementations • NeurIPS 2015 • Zhaoran Wang, Quanquan Gu, Yang Ning, Han Liu

We provide a general theory of the expectation-maximization (EM) algorithm for inferring high dimensional latent variable models.

Local Smoothness in Variance Reduced Optimization

no code implementations • NeurIPS 2015 • Daniel Vainsencher, Han Liu, Tong Zhang

We propose a family of non-uniform sampling strategies to provably speed up a class of stochastic optimization algorithms with linear convergence including Stochastic Variance Reduced Gradient (SVRG) and Stochastic Dual Coordinate Ascent (SDCA).

A Nonconvex Optimization Framework for Low Rank Matrix Estimation

no code implementations • NeurIPS 2015 • Tuo Zhao, Zhaoran Wang, Han Liu

We study the estimation of low rank matrices via nonconvex optimization.

Robust Portfolio Optimization

no code implementations • NeurIPS 2015 • Huitong Qiu, Fang Han, Han Liu, Brian Caffo

We propose a robust portfolio optimization approach based on quantile statistics.

Sparse Nonlinear Regression: Parameter Estimation and Asymptotic Inference

no code implementations • 14 Nov 2015 • Zhuoran Yang, Zhaoran Wang, Han Liu, Yonina C. Eldar, Tong Zhang

To recover $\beta^*$, we propose an $\ell_1$-regularized least-squares estimator.

A Unified Theory of Confidence Regions and Testing for High Dimensional Estimating Equations

no code implementations • 30 Oct 2015 • Matey Neykov, Yang Ning, Jun S. Liu, Han Liu

Our main theoretical contribution is to establish a unified Z-estimation theory of confidence regions for high dimensional problems.

Optimal linear estimation under unknown nonlinear transform

no code implementations • NeurIPS 2015 • Xinyang Yi, Zhaoran Wang, Constantine Caramanis, Han Liu

This model is known as the single-index model in statistics, and, among other things, it represents a significant generalization of one-bit compressed sensing.

Graphical Fermat's Principle and Triangle-Free Graph Estimation

no code implementations • 23 Apr 2015 • Junwei Lu, Han Liu

We consider the problem of estimating undirected triangle-free graphs of high dimensional distributions.

The Knowledge Gradient Policy Using A Sparse Additive Belief Model

no code implementations • 18 Mar 2015 • Yan Li, Han Liu, Warren Powell

We propose a sequential learning policy for noisy discrete global optimization and ranking and selection (R&S) problems with high dimensional sparse belief functions, where there are hundreds or even thousands of features, but only a small portion of these features contain explanatory power.

Kernel Meets Sieve: Post-Regularization Confidence Bands for Sparse Additive Model

no code implementations • 10 Mar 2015 • Junwei Lu, Mladen Kolar, Han Liu

We develop a novel procedure for constructing confidence bands for components of a sparse additive model.

Statistical Limits of Convex Relaxations

no code implementations • 4 Mar 2015 • Zhaoran Wang, Quanquan Gu, Han Liu

Many high dimensional sparse learning problems are formulated as nonconvex optimization.

An Extreme-Value Approach for Testing the Equality of Large U-Statistic Based Correlation Matrices

no code implementations • 11 Feb 2015 • Cheng Zhou, Fang Han, Xinsheng Zhang, Han Liu

Theoretically, we develop a theory for testing the equality of U-statistic based correlation matrices.

Local and Global Inference for High Dimensional Nonparanormal Graphical Models

no code implementations • 9 Feb 2015 • Quanquan Gu, Yuan Cao, Yang Ning, Han Liu

Due to the presence of unknown marginal transformations, we propose a pseudo likelihood based inferential approach.

Provable Sparse Tensor Decomposition

no code implementations • 5 Feb 2015 • Will Wei Sun, Junwei Lu, Han Liu, Guang Cheng

We propose a novel sparse tensor decomposition method, namely Tensor Truncated Power (TTP) method, that incorporates variable selection into the estimation of decomposition components.

A General Theory of Hypothesis Tests and Confidence Regions for Sparse High Dimensional Models

no code implementations • 30 Dec 2014 • Yang Ning, Han Liu

Specifically, we propose a decorrelated score function to handle the impact of high dimensional nuisance parameters.

On Semiparametric Exponential Family Graphical Models

no code implementations • 30 Dec 2014 • Zhuoran Yang, Yang Ning, Han Liu

We propose a new class of semiparametric exponential family graphical models for the analysis of high dimensional mixed data.

High Dimensional Expectation-Maximization Algorithm: Statistical Optimization and Asymptotic Normality

no code implementations • 30 Dec 2014 • Zhaoran Wang, Quanquan Gu, Yang Ning, Han Liu

We provide a general theory of the expectation-maximization (EM) algorithm for inferring high dimensional latent variable models.

A General Framework for Robust Testing and Confidence Regions in High-Dimensional Quantile Regression

no code implementations • 30 Dec 2014 • Tianqi Zhao, Mladen Kolar, Han Liu

Our de-biasing procedure does not require solving the $L_1$-penalized composite quantile regression.

Pathwise Coordinate Optimization for Sparse Learning: Algorithm and Theory

no code implementations • 23 Dec 2014 • Tuo Zhao, Han Liu, Tong Zhang

This is the first result on the computational and statistical guarantees of the pathwise coordinate optimization framework in high dimensions.

Testing and Confidence Intervals for High Dimensional Proportional Hazards Model

no code implementations • 16 Dec 2014 • Ethan X. Fang, Yang Ning, Han Liu

This paper proposes a decorrelation-based approach to test hypotheses and construct confidence intervals for the low dimensional component of high dimensional proportional hazards models.

A Likelihood Ratio Framework for High Dimensional Semiparametric Regression

no code implementations • 6 Dec 2014 • Yang Ning, Tianqi Zhao, Han Liu

(i) We develop a regularized statistical chromatography approach to infer the parameter of interest under the proposed semiparametric generalized linear model without the need of estimating the unknown base measure function.

Mode Estimation for High Dimensional Discrete Tree Graphical Models

no code implementations • NeurIPS 2014 • Chao Chen, Han Liu, Dimitris Metaxas, Tianqi Zhao

Though the mode finding problem is generally intractable in high dimensions, this paper unveils that, if the distribution can be approximated well by a tree graphical model, mode characterization is significantly easier.

Multivariate Regression with Calibration

no code implementations • NeurIPS 2014 • Han Liu, Lie Wang, Tuo Zhao

We propose a new method named calibrated multivariate regression (CMR) for fitting high dimensional multivariate regression models.

Accelerated Mini-batch Randomized Block Coordinate Descent Method

no code implementations • NeurIPS 2014 • Tuo Zhao, Mo Yu, Yiming Wang, Raman Arora, Han Liu

When the regularization function is block separable, we can solve the minimization problems in a randomized block coordinate descent (RBCD) manner.

Tighten after Relax: Minimax-Optimal Sparse PCA in Polynomial Time

no code implementations • NeurIPS 2014 • Zhaoran Wang, Huanran Lu, Han Liu

In this paper, we propose a two-stage sparse PCA procedure that attains the optimal principal subspace estimator in polynomial time.

Stochastic Compositional Gradient Descent: Algorithms for Minimizing Compositions of Expected-Value Functions

no code implementations • 14 Nov 2014 • Mengdi Wang, Ethan X. Fang, Han Liu

For smooth convex problems, the SCGD can be accelerated to converge at a rate of $O(k^{-2/7})$ in the general case and $O(k^{-4/5})$ in the strongly convex case.

Nonconvex Statistical Optimization: Minimax-Optimal Sparse PCA in Polynomial Time

no code implementations • 22 Aug 2014 • Zhaoran Wang, Huanran Lu, Han Liu

To optimally estimate sparse principal subspaces, we propose a two-stage computational framework named "tighten after relax". In the "relax" stage, we approximately solve a convex relaxation of sparse PCA with early stopping to obtain a desired initial estimator. In the "tighten" stage, we propose a novel algorithm called sparse orthogonal iteration pursuit (SOAP), which iteratively refines the initial estimator by directly solving the underlying nonconvex problem.

High Dimensional Semiparametric Latent Graphical Model for Mixed Data

1 code implementation • 29 Apr 2014 • Jianqing Fan, Han Liu, Yang Ning, Hui Zou

Theoretically, the proposed methods achieve the same rates of convergence for both precision matrix estimation and eigenvector estimation, as if the latent variables were observed.

High Dimensional Semiparametric Scale-Invariant Principal Component Analysis

no code implementations • 18 Feb 2014 • Fang Han, Han Liu

We propose a new high dimensional semiparametric principal component analysis (PCA) method, named Copula Component Analysis (COCA).

Nonparametric Latent Tree Graphical Models: Inference, Estimation, and Structure Learning

no code implementations • 16 Jan 2014 • Le Song, Han Liu, Ankur Parikh, Eric Xing

Tree structured graphical models are powerful at expressing long range or hierarchical dependency among many variables, and have been widely applied in different areas of computer science and statistics.

Optimization for Compressed Sensing: the Simplex Method and Kronecker Sparsification

no code implementations • 16 Dec 2013 • Robert Vanderbei, Han Liu, Lie Wang, Kevin Lin

For the first approach, we note that the zero vector can be taken as the initial basic (infeasible) solution for the linear programming problem and therefore, if the true signal is very sparse, some variants of the simplex method can be expected to take only a small number of pivots to arrive at a solution.

Robust Sparse Principal Component Regression under the High Dimensional Elliptical Model

no code implementations • NeurIPS 2013 • Fang Han, Han Liu

In this paper we focus on principal component regression and its application to high dimensional non-Gaussian data.

Sparse Inverse Covariance Estimation with Calibration

no code implementations • NeurIPS 2013 • Tuo Zhao, Han Liu

We propose a semiparametric procedure for estimating a high dimensional sparse inverse covariance matrix.

Joint Estimation of Multiple Graphical Models from High Dimensional Time Series

no code implementations • 1 Nov 2013 • Huitong Qiu, Fang Han, Han Liu, Brian Caffo