Test-Time Training with Masked Autoencoders

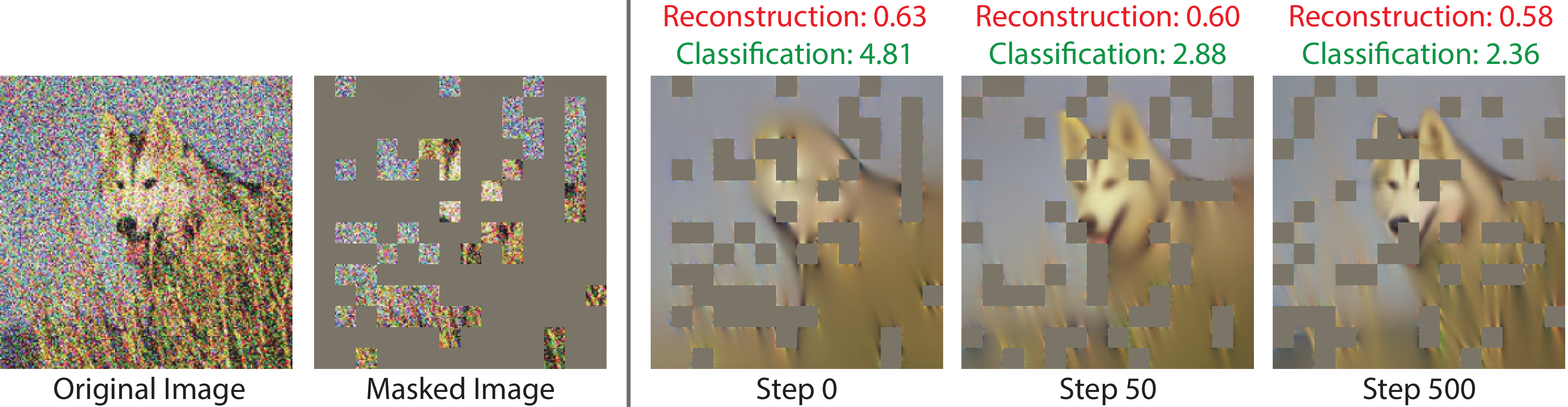

Test-time training adapts to a new test distribution on the fly by optimizing a model for each test input using self-supervision. In this paper, we use masked autoencoders for this one-sample learning problem. Empirically, our simple method improves generalization on many visual benchmarks for distribution shifts. Theoretically, we characterize this improvement in terms of the bias-variance trade-off.

Datasets

ImageNet

ImageNet-C

ImageNet-R

ImageNet-A
