Learning to Modulate Random Weights: Neuromodulation-inspired Neural Networks For Efficient Continual Learning

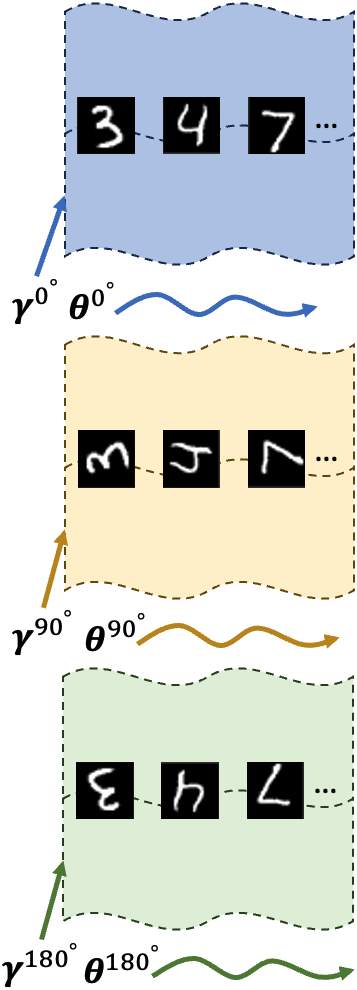

Existing Continual Learning (CL) approaches have focused on addressing catastrophic forgetting by leveraging regularization methods, replay buffers, and task-specific components. However, realistic CL solutions must be shaped not only by metrics of catastrophic forgetting but also by computational efficiency and running time. Here, we introduce a novel neural network architecture inspired by neuromodulation in biological nervous systems to economically and efficiently address catastrophic forgetting and provide new avenues for interpreting learned representations. Neuromodulation is a biological mechanism that has received limited attention in machine learning; it dynamically controls and fine-tunes synaptic dynamics in real time to track the demands of different behavioral contexts. Inspired by this, our proposed architecture learns a relatively small set of parameters per task context that *neuromodulates* the activity of unchanging, randomized weights that transform the input. We show that this approach has strong learning performance per task despite the very small number of learnable parameters. Furthermore, because context vectors are so compact, multiple networks can be stored concurrently with no interference and little spatial footprint, thus completely eliminating catastrophic forgetting and accelerating the training process.
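The core idea, a fixed random transformation shared by all tasks plus one small learned context vector per task, can be illustrated with a minimal NumPy sketch. The abstract does not specify the exact form of the modulation, so everything here is an assumption made for illustration: the multiplicative gating of pre-activations, the ReLU nonlinearity, the class name `ModulatedRandomLayer`, and its methods are all hypothetical and not taken from the authors' implementation.

```python
import numpy as np


class ModulatedRandomLayer:
    """Illustrative sketch (not the authors' code): fixed random weights
    shared by every task, plus one small learnable context vector per task."""

    def __init__(self, d_in, d_hidden, seed=0):
        rng = np.random.default_rng(seed)
        # Random projection, frozen after initialisation.
        self.W = rng.standard_normal((d_in, d_hidden)) / np.sqrt(d_in)
        self.W.setflags(write=False)  # guard against accidental updates
        self.contexts = {}  # task_id -> context vector of shape (d_hidden,)

    def add_task(self, task_id):
        # Start from all-ones, i.e. no modulation.
        self.contexts[task_id] = np.ones(self.W.shape[1])

    def forward(self, x, task_id):
        # ASSUMPTION: the context multiplicatively gates each hidden unit.
        pre = x @ self.W
        return np.maximum(pre * self.contexts[task_id], 0.0)

    def context_grad(self, x, task_id, grad_out):
        # Gradient of a scalar loss w.r.t. this task's context vector,
        # given grad_out = dLoss/dOutput with shape (batch, d_hidden).
        pre = x @ self.W
        active = (pre * self.contexts[task_id]) > 0  # ReLU mask
        return (grad_out * active * pre).sum(axis=0)


layer = ModulatedRandomLayer(d_in=8, d_hidden=16)
layer.add_task("task_a")
layer.add_task("task_b")

x = np.random.default_rng(1).standard_normal((4, 8))
out_b_before = layer.forward(x, "task_b")

# One gradient step on task_a's context only; W and task_b are untouched.
grad = layer.context_grad(x, "task_a", grad_out=np.ones((4, 16)))
layer.contexts["task_a"] -= 0.1 * grad

print(layer.W.size, layer.contexts["task_a"].size)  # shared vs. per-task parameter counts
print(np.array_equal(layer.forward(x, "task_b"), out_b_before))
```

In this sketch, training on one task updates only that task's context vector, so outputs for every other task are unchanged by construction. That is the sense in which separately stored contexts avoid interference, and the per-task storage cost is one vector of length `d_hidden` rather than a full weight matrix.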



CIFAR-100
