AttT2M: Text-Driven Human Motion Generation with Multi-Perspective Attention Mechanism

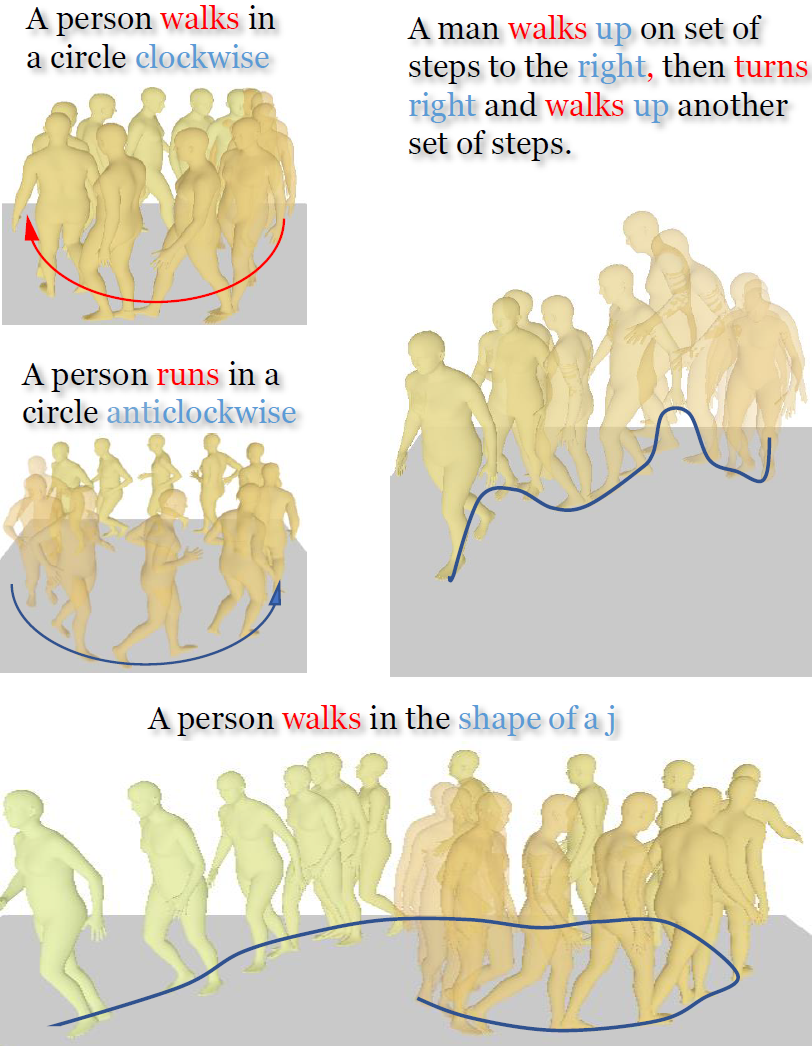

Generating 3D human motion from textual descriptions has been a research focus in recent years. The generated motion must be diverse, natural, and consistent with the textual description. Because human motion has a complex spatio-temporal nature and the cross-modal relationship between text and motion is difficult to learn, text-driven motion generation remains a challenging problem. To address these issues, we propose AttT2M, a two-stage method with a multi-perspective attention mechanism: body-part attention and global-local motion-text attention. The former works from the motion embedding perspective: it introduces a body-part spatio-temporal encoder into a VQ-VAE to learn a more expressive discrete latent space. The latter works from the cross-modal perspective: it learns the sentence-level and word-level cross-modal relationship between motion and text. The text-driven motion is finally generated with a generative transformer. Extensive experiments on HumanML3D and KIT-ML demonstrate that our method outperforms current state-of-the-art works in both qualitative and quantitative evaluation, and achieves fine-grained synthesis and action2motion. Our code is available at https://github.com/ZcyMonkey/AttT2M
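The two building blocks named in the abstract can be illustrated with a minimal, dependency-free sketch. This is not the authors' implementation (see the linked repository for that). It only shows, under simplifying assumptions, the generic operations involved: nearest-neighbour codebook lookup as used in a VQ-VAE, and scaled dot-product cross-attention in which motion features act as queries over word embeddings. The function names `quantize` and `cross_attention`, and the toy vectors in the usage note, are hypothetical.

```python
import math


def quantize(features, codebook):
    """Map each feature vector to the index of its nearest codebook entry.

    Generic VQ-VAE lookup (squared Euclidean distance); illustrative only.
    """
    indices = []
    for f in features:
        dists = [sum((a - b) ** 2 for a, b in zip(f, c)) for c in codebook]
        indices.append(dists.index(min(dists)))
    return indices


def cross_attention(queries, keys, values):
    """Scaled dot-product attention with a softmax over the keys.

    Here the queries stand in for motion features and the keys/values
    for word embeddings (an assumption made for illustration).
    """
    d = len(keys[0])
    outputs = []
    for q in queries:
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in keys]
        m = max(scores)  # subtract the max for numerical stability
        exps = [math.exp(s - m) for s in scores]
        z = sum(exps)
        weights = [e / z for e in exps]
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, values))
             for j in range(len(values[0]))]
        )
    return outputs
```

For example, `quantize([[0.1, 0.0], [0.9, 1.1]], [[0.0, 0.0], [1.0, 1.0]])` assigns the first vector to codebook entry 0 and the second to entry 1. The resulting index sequence is the kind of discrete representation a generative transformer would then model.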

ICCV 2023


| Task | Dataset | Model | Metric Name | Metric Value | Global Rank |
|---|---|---|---|---|---|
| Motion Synthesis | HumanML3D | AttT2M | FID | 0.112 | # 10 |
| Motion Synthesis | HumanML3D | AttT2M | Diversity | 9.700 | # 7 |
| Motion Synthesis | HumanML3D | AttT2M | Multimodality | 2.452 | # 5 |
| Motion Synthesis | HumanML3D | AttT2M | R Precision Top3 | 0.786 | # 9 |
| Motion Synthesis | KIT Motion-Language | AttT2M | FID | 0.870 | # 16 |
| Motion Synthesis | KIT Motion-Language | AttT2M | R Precision Top3 | 0.751 | # 7 |
| Motion Synthesis | KIT Motion-Language | AttT2M | Diversity | 10.96 | # 4 |
| Motion Synthesis | KIT Motion-Language | AttT2M | Multimodality | 2.281 | # 5 |

HumanML3D