Discriminators

PatchGAN

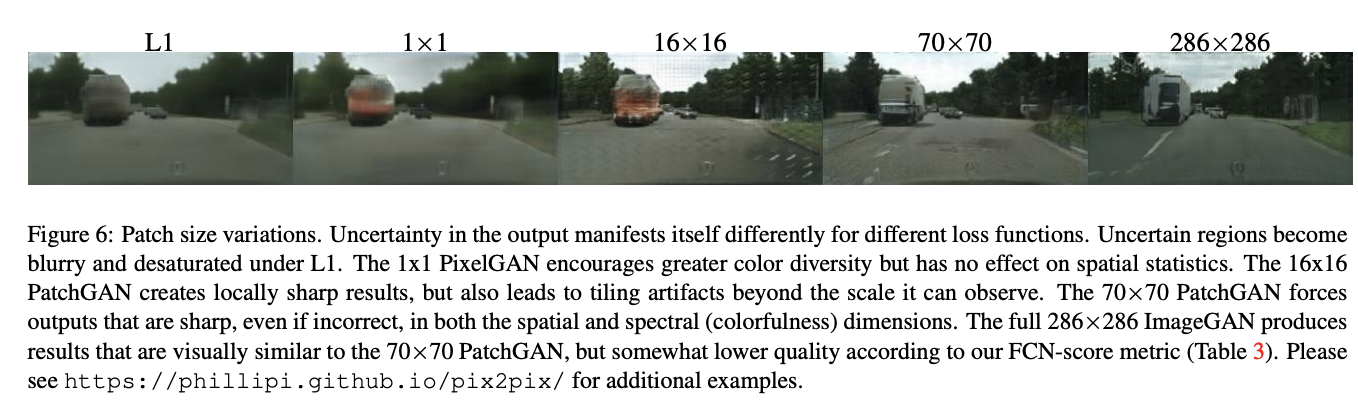

Introduced by Isola et al. in Image-to-Image Translation with Conditional Adversarial Networks.

PatchGAN is a type of discriminator for generative adversarial networks that penalizes structure only at the scale of local image patches. The PatchGAN discriminator tries to classify whether each $N \times N$ patch in an image is real or fake. This discriminator is run convolutionally across the image, and all responses are averaged to produce the final output of $D$. Such a discriminator effectively models the image as a Markov random field, assuming independence between pixels separated by more than a patch diameter. It can therefore be understood as a form of texture/style loss.
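The following is a minimal pure-Python sketch of two ideas from the paragraph above, not the authors' implementation. First, in a convolutional PatchGAN the patch size $N$ is the receptive field of one output unit, which follows from the kernel sizes and strides of the layer stack. The layer list `PIX2PIX_70` (4×4 kernels, strides 2, 2, 2, 1, 1, padding 1) is an assumption matching the commonly cited 70×70 configuration. Second, the same patch classifier is applied at every location and the responses are averaged into a single output. Here `toy_score` is a hypothetical stand-in for a learned classifier, and all function names are illustrative. A real PatchGAN computes every patch score in one CNN forward pass rather than an explicit loop.

```python
import math
from typing import Callable, List, Sequence, Tuple

Image = List[List[float]]

# Assumed (kernel_size, stride) stack for a 70x70 PatchGAN; padding 1 everywhere.
PIX2PIX_70 = [(4, 2), (4, 2), (4, 2), (4, 1), (4, 1)]


def receptive_field(layers: Sequence[Tuple[int, int]]) -> int:
    """Side length (pixels) of the input region seen by one output unit,
    computed by walking the conv stack from the output back to the input."""
    rf = 1
    for kernel, stride in reversed(layers):
        rf = (rf - 1) * stride + kernel
    return rf


def output_size(size: int, layers: Sequence[Tuple[int, int]], padding: int = 1) -> int:
    """Side length of the output score map for a square input of side `size`."""
    for kernel, stride in layers:
        size = (size + 2 * padding - kernel) // stride + 1
    return size


def patch_discriminator(image: Image, n: int, stride: int,
                        score: Callable[[Image], float]) -> Tuple[Image, float]:
    """Apply the same patch classifier `score` to every n x n window
    (no padding) and return the score grid plus its average, i.e. D's output."""
    h, w = len(image), len(image[0])
    grid: Image = []
    for top in range(0, h - n + 1, stride):
        row = []
        for left in range(0, w - n + 1, stride):
            patch = [r[left:left + n] for r in image[top:top + n]]
            row.append(score(patch))
        grid.append(row)
    flat = [p for row in grid for p in row]
    return grid, sum(flat) / len(flat)


def toy_score(patch: Image) -> float:
    """Hypothetical patch classifier: sigmoid of (mean intensity - 0.5),
    read as the probability that the patch is real."""
    mean = sum(sum(r) for r in patch) / (len(patch) * len(patch[0]))
    return 1.0 / (1.0 + math.exp(-(mean - 0.5)))


print(receptive_field(PIX2PIX_70))   # → 70
print(output_size(256, PIX2PIX_70))  # → 30

img = [[0.5] * 8 for _ in range(8)]
grid, d = patch_discriminator(img, n=4, stride=2, score=toy_score)
print(len(grid), len(grid[0]), d)    # → 3 3 0.5
```

Under these assumptions, a 256×256 input yields a 30×30 grid of patch scores, each covering a 70×70 region. Neighbouring patches overlap heavily, and averaging the grid gives the scalar output of $D$.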

Source: Image-to-Image Translation with Conditional Adversarial Networks

Tasks

| Task | Papers | Share |
|---|---|---|
| Translation | 118 | 14.57% |
| Image-to-Image Translation | 101 | 12.47% |
| Image Generation | 50 | 6.17% |
| Domain Adaptation | 33 | 4.07% |
| Semantic Segmentation | 28 | 3.46% |
| Style Transfer | 27 | 3.33% |
| Super-Resolution | 14 | 1.73% |
| Denoising | 13 | 1.60% |
| Object Detection | 11 | 1.36% |
