Search Results for author: Shikui Wei

Found 15 papers, 5 papers with code

Frequency-Aware Deepfake Detection: Improving Generalizability through Frequency Space Learning

1 code implementation • 12 Mar 2024 • Chuangchuang Tan, Yao Zhao, Shikui Wei, Guanghua Gu, Ping Liu, Yunchao Wei

Consequently, these detectors struggle to learn from the frequency domain and tend to overfit to the artifacts present in the training data, which leads to suboptimal performance on unseen sources.

Learning Invariant Inter-pixel Correlations for Superpixel Generation

1 code implementation • 28 Feb 2024 • Sen Xu, Shikui Wei, Tao Ruan, Lixin Liao

To address this issue, we propose the Content Disentangle Superpixel (CDS) algorithm, which selectively separates the invariant inter-pixel correlations from statistical properties (i.e., style noise).

Structure-Preserving Physics-Informed Neural Networks With Energy or Lyapunov Structure

no code implementations • 10 Jan 2024 • Haoyu Chu, Yuto Miyatake, Wenjun Cui, Shikui Wei, Daisuke Furihata

Experimental results demonstrate that the proposed method improves the numerical accuracy of PINNs for partial differential equations.

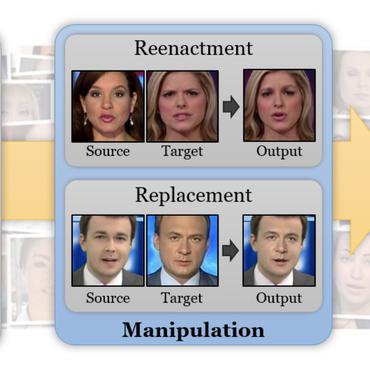

Rethinking the Up-Sampling Operations in CNN-based Generative Network for Generalizable Deepfake Detection

2 code implementations • 16 Dec 2023 • Chuangchuang Tan, Huan Liu, Yao Zhao, Shikui Wei, Guanghua Gu, Ping Liu, Yunchao Wei

Recently, the proliferation of highly realistic synthetic images, enabled by a variety of GANs and diffusion models, has significantly heightened the risk of misuse.

Lyapunov-Stable Deep Equilibrium Models

no code implementations • 25 Apr 2023 • Haoyu Chu, Shikui Wei, Ting Liu, Yao Zhao, Yuto Miyatake

Deep equilibrium (DEQ) models have emerged as a promising class of implicit layer models, which abandon traditional depth by solving for the fixed points of a single nonlinear layer.
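As a rough illustration of what "solving for the fixed points of a single nonlinear layer" means, the sketch below runs plain fixed-point iteration on a hypothetical layer f(z, x) = tanh(W z + U x + b). The layer form, the weights, and the helper names (`layer`, `solve_fixed_point`) are assumptions made for illustration. This is generic fixed-point iteration, not the Lyapunov-stable method proposed in the paper; practical DEQ solvers often use accelerated root finders instead.

```python
import math
from typing import List, Tuple

Vector = List[float]
Matrix = List[List[float]]


def matvec(m: Matrix, v: Vector) -> Vector:
    """Multiply matrix m by vector v."""
    return [sum(a * b for a, b in zip(row, v)) for row in m]


def layer(z: Vector, x: Vector, w: Matrix, u: Matrix, b: Vector) -> Vector:
    """Hypothetical single nonlinear layer f(z, x) = tanh(W z + U x + b)."""
    wz = matvec(w, z)
    ux = matvec(u, x)
    return [math.tanh(p + q + r) for p, q, r in zip(wz, ux, b)]


def solve_fixed_point(x: Vector, w: Matrix, u: Matrix, b: Vector,
                      tol: float = 1e-10, max_iter: int = 1000) -> Tuple[Vector, int]:
    """Iterate z <- f(z, x) from z = 0 until successive iterates stop changing."""
    z = [0.0] * len(b)
    for i in range(max_iter):
        z_next = layer(z, x, w, u, b)
        diff = max(abs(p - q) for p, q in zip(z_next, z))
        z = z_next
        if diff < tol:
            return z, i + 1
    raise RuntimeError("fixed-point iteration did not converge")


# Small weights (max row sum of |W| is 0.35 < 1) make f a contraction in z,
# so the iteration is guaranteed to converge to a unique equilibrium.
W = [[0.2, -0.1], [0.05, 0.3]]
U = [[1.0, 0.0], [0.0, 1.0]]
B = [0.1, -0.2]
x = [0.5, 1.0]

z_star, steps = solve_fixed_point(x, W, U, B)
residual = max(abs(p - q) for p, q in zip(layer(z_star, x, W, U, B), z_star))
print(f"converged in {steps} steps, residual {residual:.2e}")
```

The equilibrium `z_star` stands in for the output of a weight-tied network applied "infinitely" many times; the depth lives in the solver rather than in a stack of distinct layers.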

Learning on Gradients: Generalized Artifacts Representation for GAN-Generated Images Detection

1 code implementation • CVPR 2023 • Chuangchuang Tan, Yao Zhao, Shikui Wei, Guanghua Gu, Yunchao Wei

The key to fake image detection is developing a generalized representation that describes the artifacts produced by generation models.

Improving Neural ODEs via Knowledge Distillation

no code implementations • 10 Mar 2022 • Haoyu Chu, Shikui Wei, Qiming Lu, Yao Zhao

We propose a new training method based on knowledge distillation to construct more powerful and robust Neural ODEs for image recognition tasks.

Towards Natural Robustness Against Adversarial Examples

no code implementations • 4 Dec 2020 • Haoyu Chu, Shikui Wei, Yao Zhao

Thus, Neural ODEs have natural robustness against adversarial examples.

LID 2020: The Learning from Imperfect Data Challenge Results

no code implementations • 17 Oct 2020 • Yunchao Wei, Shuai Zheng, Ming-Ming Cheng, Hang Zhao, LiWei Wang, Errui Ding, Yi Yang, Antonio Torralba, Ting Liu, Guolei Sun, Wenguan Wang, Luc van Gool, Wonho Bae, Junhyug Noh, Jinhwan Seo, Gunhee Kim, Hao Zhao, Ming Lu, Anbang Yao, Yiwen Guo, Yurong Chen, Li Zhang, Chuangchuang Tan, Tao Ruan, Guanghua Gu, Shikui Wei, Yao Zhao, Mariia Dobko, Ostap Viniavskyi, Oles Dobosevych, Zhendong Wang, Zhenyuan Chen, Chen Gong, Huanqing Yan, Jun He

The purpose of the Learning from Imperfect Data (LID) workshop is to inspire and facilitate research on novel approaches that harness imperfect data and improve data efficiency during training.

Referring Image Segmentation by Generative Adversarial Learning

no code implementations • IEEE 2020 • Shuang Qiu, Yao Zhao, Jianbo Jiao, Yunchao Wei, Shikui Wei

To this end, we propose training the referring image segmentation model in a generative adversarial fashion, which effectively addresses the distribution similarity problem.

Devil in the Details: Towards Accurate Single and Multiple Human Parsing

2 code implementations • 17 Sep 2018 • Tao Ruan, Ting Liu, Zilong Huang, Yunchao Wei, Shikui Wei, Yao Zhao, Thomas Huang

Human parsing has received considerable interest due to its wide range of potential applications.

Ranked #2 on Person Re-Identification on Market-1501-C

A New Evaluation Protocol and Benchmarking Results for Extendable Cross-media Retrieval

no code implementations • 10 Mar 2017 • Ruoyu Liu, Yao Zhao, Liang Zheng, Shikui Wei, Yi Yang

Additionally, a trivial solution, i.e., directly using the predicted class label for cross-media retrieval, is tested.

Camera Fingerprint: A New Perspective for Identifying User's Identity

no code implementations • 25 Oct 2016 • Xiang Jiang, Shikui Wei, Ruizhen Zhao, Yao Zhao, Xindong Wu

The underlying assumption is that multiple accounts belonging to the same person contain the same or similar camera fingerprint information.

Indexing of CNN Features for Large Scale Image Search

no code implementations • 2 Aug 2015 • Ruoyu Liu, Yao Zhao, Shikui Wei, Yi Yang

Convolutional neural network (CNN) features describe image content well and usually represent each image with a single global vector.
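To make "representing images with global vectors" concrete, here is a brute-force nearest-neighbour search over L2-normalised global descriptors using cosine similarity. The toy 4-D vectors and the names `normalize` and `search` are hypothetical stand-ins for real CNN features. The paper itself is about indexing such features for large-scale search, which this exhaustive linear scan does not attempt.

```python
import math
from typing import Dict, List, Tuple


def normalize(v: List[float]) -> List[float]:
    """L2-normalise a vector so that a dot product equals cosine similarity."""
    norm = math.sqrt(sum(a * a for a in v))
    return [a / norm for a in v]


def search(query: List[float], database: Dict[str, List[float]],
           k: int = 2) -> List[Tuple[str, float]]:
    """Return the k database ids most similar to the query by cosine similarity."""
    q = normalize(query)
    scores = []
    for image_id, feat in database.items():
        f = normalize(feat)
        scores.append((image_id, sum(a * b for a, b in zip(q, f))))
    # Exhaustive scan: every database vector is scored, then sorted.
    scores.sort(key=lambda item: item[1], reverse=True)
    return scores[:k]


# Hypothetical global descriptors, one vector per image.
db = {
    "cat_1": [0.9, 0.1, 0.0, 0.2],
    "cat_2": [0.8, 0.2, 0.1, 0.1],
    "car_1": [0.0, 0.1, 0.9, 0.4],
}
results = search([1.0, 0.15, 0.05, 0.15], db)
for image_id, score in results:
    print(image_id, round(score, 3))
```

A linear scan costs one dot product per database image per query, which is exactly the cost that an index over the global vectors is meant to avoid at large scale.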

Modality-dependent Cross-media Retrieval

no code implementations • 22 Jun 2015 • Yunchao Wei, Yao Zhao, Zhenfeng Zhu, Shikui Wei, Yanhui Xiao, Jiashi Feng, Shuicheng Yan

Specifically, by jointly optimizing the correlation between images and text and the linear regression from one modal space (image or text) to the semantic space, two pairs of mappings are learned to project images and text from their original feature spaces into two common latent subspaces (one for I2T and the other for T2I).