Search Results for author: Lewei Yao

Found 14 papers, 6 papers with code

DetCLIPv3: Towards Versatile Generative Open-vocabulary Object Detection

no code implementations • 14 Apr 2024 • Lewei Yao, Renjie Pi, Jianhua Han, Xiaodan Liang, Hang Xu, Wei Zhang, Zhenguo Li, Dan Xu

This is followed by a fine-tuning stage that leverages a small number of high-resolution samples to further enhance detection performance.

Ranked #2 on Object Detection on ODinW Full-Shot 13 Tasks

PixArt-Σ: Weak-to-Strong Training of Diffusion Transformer for 4K Text-to-Image Generation

no code implementations • 7 Mar 2024 • Junsong Chen, Chongjian Ge, Enze Xie, Yue Wu, Lewei Yao, Xiaozhe Ren, Zhongdao Wang, Ping Luo, Huchuan Lu, Zhenguo Li

In this paper, we introduce PixArt-Σ, a Diffusion Transformer model (DiT) capable of directly generating images at 4K resolution.

PerceptionGPT: Effectively Fusing Visual Perception into LLM

no code implementations • 11 Nov 2023 • Renjie Pi, Lewei Yao, Jiahui Gao, Jipeng Zhang, Tong Zhang

In this paper, we present a novel end-to-end framework named PerceptionGPT, which efficiently and effectively equips the VLLMs with visual perception abilities by leveraging the representation power of LLMs' token embedding.

PixArt-α: Fast Training of Diffusion Transformer for Photorealistic Text-to-Image Synthesis

2 code implementations • 30 Sep 2023 • Junsong Chen, Jincheng Yu, Chongjian Ge, Lewei Yao, Enze Xie, Yue Wu, Zhongdao Wang, James Kwok, Ping Luo, Huchuan Lu, Zhenguo Li

We hope PixArt-α will provide new insights to the AIGC community and startups to accelerate building their own high-quality yet low-cost generative models from scratch.

DiT-3D: Exploring Plain Diffusion Transformers for 3D Shape Generation

1 code implementation • NeurIPS 2023 • Shentong Mo, Enze Xie, Ruihang Chu, Lewei Yao, Lanqing Hong, Matthias Nießner, Zhenguo Li

Recent Diffusion Transformers (e.g., DiT) have demonstrated their powerful effectiveness in generating high-quality 2D images.

Ranked #1 on Point Cloud Generation on ShapeNet Car

Ranked #1 on

Point Cloud Generation

on ShapeNet Car

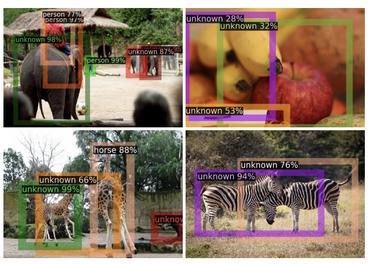

DetGPT: Detect What You Need via Reasoning

1 code implementation • 23 May 2023 • Renjie Pi, Jiahui Gao, Shizhe Diao, Rui Pan, Hanze Dong, Jipeng Zhang, Lewei Yao, Jianhua Han, Hang Xu, Lingpeng Kong, Tong Zhang

Overall, our proposed paradigm and DetGPT demonstrate the potential for more sophisticated and intuitive interactions between humans and machines.

DiffFit: Unlocking Transferability of Large Diffusion Models via Simple Parameter-Efficient Fine-Tuning

1 code implementation • ICCV 2023 • Enze Xie, Lewei Yao, Han Shi, Zhili Liu, Daquan Zhou, Zhaoqiang Liu, Jiawei Li, Zhenguo Li

This paper proposes DiffFit, a parameter-efficient strategy for fine-tuning large pre-trained diffusion models that enables fast adaptation to new domains.

DetCLIPv2: Scalable Open-Vocabulary Object Detection Pre-training via Word-Region Alignment

no code implementations • CVPR 2023 • Lewei Yao, Jianhua Han, Xiaodan Liang, Dan Xu, Wei Zhang, Zhenguo Li, Hang Xu

This paper presents DetCLIPv2, an efficient and scalable training framework that incorporates large-scale image-text pairs to achieve open-vocabulary object detection (OVD).

Ranked #5 on Object Detection on ODinW Full-Shot 13 Tasks

DetCLIP: Dictionary-Enriched Visual-Concept Paralleled Pre-training for Open-world Detection

no code implementations • 20 Sep 2022 • Lewei Yao, Jianhua Han, Youpeng Wen, Xiaodan Liang, Dan Xu, Wei Zhang, Zhenguo Li, Chunjing Xu, Hang Xu

We further design a concept dictionary (with descriptions) from various online sources and detection datasets to provide prior knowledge for each concept.

Wukong: A 100 Million Large-scale Chinese Cross-modal Pre-training Benchmark

1 code implementation • 14 Feb 2022 • Jiaxi Gu, Xiaojun Meng, Guansong Lu, Lu Hou, Minzhe Niu, Xiaodan Liang, Lewei Yao, Runhui Huang, Wei Zhang, Xin Jiang, Chunjing Xu, Hang Xu

Experiments show that Wukong can serve as a promising Chinese pre-training dataset and benchmark for different cross-modal learning methods.

Ranked #6 on Image Retrieval on MUGE Retrieval

FILIP: Fine-grained Interactive Language-Image Pre-Training

1 code implementation • ICLR 2022 • Lewei Yao, Runhui Huang, Lu Hou, Guansong Lu, Minzhe Niu, Hang Xu, Xiaodan Liang, Zhenguo Li, Xin Jiang, Chunjing Xu

In this paper, we introduce a large-scale Fine-grained Interactive Language-Image Pre-training (FILIP) to achieve finer-level alignment through a cross-modal late interaction mechanism, which uses a token-wise maximum similarity between visual and textual tokens to guide the contrastive objective.
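The token-wise maximum similarity mentioned above can be illustrated with a minimal sketch. This is one plausible reading of the summary, not the authors' implementation: the function names, the plain-list token representation, and the choice to average the per-token maxima are all assumptions made for illustration.

```python
import math


def l2_normalize(vec):
    # Scale a vector to unit length (assumes the vector is non-zero).
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec]


def tokenwise_max_similarity(image_tokens, text_tokens):
    """Hypothetical helper: late-interaction similarity between two token sets.

    image_tokens and text_tokens are lists of equal-length embedding vectors.
    Returns (image_to_text, text_to_image) scores.
    """
    img = [l2_normalize(v) for v in image_tokens]
    txt = [l2_normalize(v) for v in text_tokens]

    # Cosine similarity between every image token and every text token.
    sim = [[sum(a * b for a, b in zip(i, t)) for t in txt] for i in img]

    # For each image token, keep its best-matching text token, then average.
    image_to_text = sum(max(row) for row in sim) / len(img)
    # For each text token, keep its best-matching image token, then average.
    text_to_image = sum(max(col) for col in zip(*sim)) / len(txt)
    return image_to_text, text_to_image


i2t, t2i = tokenwise_max_similarity([[1.0, 0.0], [0.0, 1.0]], [[1.0, 0.0]])
print(i2t, t2i)
```

In a contrastive setup, scores like these would stand in for the single global image-text similarity, computed for every image-text pair in a batch.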

G-DetKD: Towards General Distillation Framework for Object Detectors via Contrastive and Semantic-guided Feature Imitation

no code implementations • ICCV 2021 • Lewei Yao, Renjie Pi, Hang Xu, Wei Zhang, Zhenguo Li, Tong Zhang

In this paper, we investigate the knowledge distillation (KD) strategy for object detection and propose an effective framework applicable to both homogeneous and heterogeneous student-teacher pairs.

Joint-DetNAS: Upgrade Your Detector with NAS, Pruning and Dynamic Distillation

no code implementations • CVPR 2021 • Lewei Yao, Renjie Pi, Hang Xu, Wei Zhang, Zhenguo Li, Tong Zhang

For student morphism, a weight inheritance strategy is adopted, allowing the student to flexibly update its architecture while fully utilizing the predecessor's weights, which considerably accelerates the search. To facilitate dynamic distillation, an elastic teacher pool is trained via an integrated progressive shrinking strategy, from which teacher detectors can be sampled without additional cost in subsequent searches.

SM-NAS: Structural-to-Modular Neural Architecture Search for Object Detection

no code implementations • 22 Nov 2019 • Lewei Yao, Hang Xu, Wei Zhang, Xiaodan Liang, Zhenguo Li

In this paper, we present a two-stage coarse-to-fine searching strategy named Structural-to-Modular NAS (SM-NAS) for searching a GPU-friendly design of both an efficient combination of modules and better modular-level architecture for object detection.