Search Results for author: Deng-Ping Fan

Found 84 papers, 75 papers with code

BBS-Net: RGB-D Salient Object Detection with a Bifurcated Backbone Strategy Network

1 code implementation • ECCV 2020 • Deng-Ping Fan, Yingjie Zhai, Ali Borji, Jufeng Yang, Ling Shao

In particular, we 1) propose a bifurcated backbone strategy (BBS) to split the multi-level features into teacher and student features, and 2) utilize a depth-enhanced module (DEM) to excavate informative parts of depth cues from the channel and spatial views.

Minimize Quantization Output Error with Bias Compensation

1 code implementation • 2 Apr 2024 • Cheng Gong, Haoshuai Zheng, Mengting Hu, Zheng Lin, Deng-Ping Fan, Yuzhi Zhang, Tao Li

Quantization is a promising method that reduces memory usage and computational intensity of Deep Neural Networks (DNNs), but it often leads to significant output error that hinders model deployment.
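
The snippet only states the problem. As a hedged illustration of the general idea of compensating quantization output error through the bias, the sketch below uses plain bias correction. After quantizing a linear layer's weights, it shifts the bias by the mean output error (W - W_q)·E[x], estimated on calibration inputs, which removes the systematic (mean) part of the error. The toy weights, the 3-bit symmetric per-row quantizer, and all function names are assumptions made for illustration. This is not a reproduction of the paper's method.

```python
import random


def quantize_row(row, num_bits=3):
    """Symmetric uniform quantization of one weight row (per-row scale)."""
    qmax = 2 ** (num_bits - 1) - 1
    scale = max(abs(v) for v in row) / qmax or 1.0
    return [round(v / scale) * scale for v in row]


def matvec(W, x):
    """Plain matrix-vector product for nested lists."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]


def compensate_bias(W, W_q, b, calib):
    """Shift the bias by the mean output error (W - W_q) @ mean(x)."""
    n, dim = len(calib), len(calib[0])
    mu = [sum(x[j] for x in calib) / n for j in range(dim)]
    delta = [[w - wq for w, wq in zip(r, rq)] for r, rq in zip(W, W_q)]
    return [bi + e for bi, e in zip(b, matvec(delta, mu))]


def mean_output_error(W, b, W_q, b_q, calib):
    """Per-channel average of (full-precision output - quantized output)."""
    errs = [0.0] * len(W)
    for x in calib:
        y, y_q = matvec(W, x), matvec(W_q, x)
        for i in range(len(W)):
            errs[i] += ((y[i] + b[i]) - (y_q[i] + b_q[i])) / len(calib)
    return errs


# Hypothetical toy layer and calibration data.
rng = random.Random(0)
W = [[0.9, -0.33, 0.1], [0.27, 0.61, -0.8]]
b = [0.0, 0.1]
# Non-negative inputs (e.g. after a ReLU) have a non-zero mean, so the error is biased.
calib = [[rng.random() for _ in range(3)] for _ in range(1000)]

W_q = [quantize_row(row) for row in W]
b_c = compensate_bias(W, W_q, b, calib)
print(mean_output_error(W, b, W_q, b, calib))    # noticeably non-zero
print(mean_output_error(W, b, W_q, b_c, calib))  # ~0 up to float rounding
```

Only the mean component of the error is removed; the input-dependent part caused by rounding each weight is left untouched.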

LAKE-RED: Camouflaged Images Generation by Latent Background Knowledge Retrieval-Augmented Diffusion

1 code implementation • 30 Mar 2024 • Pancheng Zhao, Peng Xu, Pengda Qin, Deng-Ping Fan, Zhicheng Zhang, Guoli Jia, BoWen Zhou, Jufeng Yang

Camouflaged vision perception is an important vision task with numerous practical applications.

Latent Semantic Consensus For Deterministic Geometric Model Fitting

1 code implementation • 11 Mar 2024 • Guobao Xiao, Jun Yu, Jiayi Ma, Deng-Ping Fan, Ling Shao

The principle of LSC is to preserve the latent semantic consensus in both data points and model hypotheses.

Effectiveness Assessment of Recent Large Vision-Language Models

no code implementations • 7 Mar 2024 • Yao Jiang, Xinyu Yan, Ge-Peng Ji, Keren Fu, Meijun Sun, Huan Xiong, Deng-Ping Fan, Fahad Shahbaz Khan

This paper endeavors to evaluate the competency of popular LVLMs in specialized and general tasks, respectively, aiming to offer a comprehensive understanding of these novel models.

Vanishing-Point-Guided Video Semantic Segmentation of Driving Scenes

1 code implementation • 27 Jan 2024 • Diandian Guo, Deng-Ping Fan, Tongyu Lu, Christos Sakaridis, Luc van Gool

The estimation of implicit cross-frame correspondences and the high computational cost have long been major challenges in video semantic segmentation (VSS) for driving scenes.

Bilateral Reference for High-Resolution Dichotomous Image Segmentation

1 code implementation • 7 Jan 2024 • Peng Zheng, Dehong Gao, Deng-Ping Fan, Li Liu, Jorma Laaksonen, Wanli Ouyang, Nicu Sebe

The framework comprises two essential components: a localization module (LM) and a reconstruction module (RM) equipped with our proposed bilateral reference (BiRef).

Ranked #1 on Camouflaged Object Segmentation on COD

BA-SAM: Scalable Bias-Mode Attention Mask for Segment Anything Model

1 code implementation • 4 Jan 2024 • Yiran Song, Qianyu Zhou, Xiangtai Li, Deng-Ping Fan, Xuequan Lu, Lizhuang Ma

To this end, we propose Scalable Bias-Mode Attention Mask (BA-SAM) to enhance SAM's adaptability to varying image resolutions while eliminating the need for structure modifications.

Large Model Based Referring Camouflaged Object Detection

no code implementations • 28 Nov 2023 • Shupeng Cheng, Ge-Peng Ji, Pengda Qin, Deng-Ping Fan, BoWen Zhou, Peng Xu

Our motivation is to make full use of the semantic intelligence and intrinsic knowledge of recent Multimodal Large Language Models (MLLMs) to decompose this complex task in a human-like way.

VSCode: General Visual Salient and Camouflaged Object Detection with 2D Prompt Learning

1 code implementation • 25 Nov 2023 • Ziyang Luo, Nian Liu, Wangbo Zhao, Xuguang Yang, Dingwen Zhang, Deng-Ping Fan, Fahad Khan, Junwei Han

Salient object detection (SOD) and camouflaged object detection (COD) are related yet distinct binary mapping tasks.

Acquiring Weak Annotations for Tumor Localization in Temporal and Volumetric Data

1 code implementation • 23 Oct 2023 • Yu-Cheng Chou, Bowen Li, Deng-Ping Fan, Alan Yuille, Zongwei Zhou

In summary, this research proposes an efficient annotation strategy for tumor detection and localization that is less accurate than per-pixel annotations but useful for creating large-scale datasets for screening tumors in various medical modalities.

Edge-aware Feature Aggregation Network for Polyp Segmentation

no code implementations • 19 Sep 2023 • Tao Zhou, Yizhe Zhang, Geng Chen, Yi Zhou, Ye Wu, Deng-Ping Fan

In addition, a Scale-aware Convolution Module (SCM) is proposed to learn scale-aware features using dilated convolutions with different dilation rates, in order to deal effectively with scale variation.
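
As a rough illustration of why mixing dilation rates helps with scale variation, the sketch below computes the spatial extent covered by a single dilated kernel, k + (k - 1)(d - 1) for kernel size k and dilation rate d. The 3x3 kernel, the rates (1, 2, 4, 8), and the function name are hypothetical choices; the snippet does not state which rates the SCM actually uses.

```python
def effective_kernel_size(kernel_size, dilation):
    """Spatial extent (in pixels, per axis) covered by one dilated kernel."""
    return kernel_size + (kernel_size - 1) * (dilation - 1)


# Hypothetical parallel branches: one 3x3 kernel per dilation rate.
for rate in (1, 2, 4, 8):
    extent = effective_kernel_size(3, rate)
    print(f"dilation {rate}: covers {extent}x{extent} pixels")
```

With these assumed settings the four branches cover 3x3, 5x5, 9x9 and 17x17 regions while each kernel keeps only nine weights, which is why parallel dilated branches are a cheap way to respond to objects at several scales.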

OnUVS: Online Feature Decoupling Framework for High-Fidelity Ultrasound Video Synthesis

no code implementations • 16 Aug 2023 • Han Zhou, Dong Ni, Ao Chang, Xinrui Zhou, Rusi Chen, Yanlin Chen, Lian Liu, Jiamin Liang, Yuhao Huang, Tong Han, Zhe Liu, Deng-Ping Fan, Xin Yang

Second, to better preserve the integrity and textural information of US images, we implemented a dual-decoder that decouples the content and textural features in the generator.

Validating polyp and instrument segmentation methods in colonoscopy through Medico 2020 and MedAI 2021 Challenges

1 code implementation • 30 Jul 2023 • Debesh Jha, Vanshali Sharma, Debapriya Banik, Debayan Bhattacharya, Kaushiki Roy, Steven A. Hicks, Nikhil Kumar Tomar, Vajira Thambawita, Adrian Krenzer, Ge-Peng Ji, Sahadev Poudel, George Batchkala, Saruar Alam, Awadelrahman M. A. Ahmed, Quoc-Huy Trinh, Zeshan Khan, Tien-Phat Nguyen, Shruti Shrestha, Sabari Nathan, Jeonghwan Gwak, Ritika K. Jha, Zheyuan Zhang, Alexander Schlaefer, Debotosh Bhattacharjee, M. K. Bhuyan, Pradip K. Das, Deng-Ping Fan, Sravanthi Parsa, Sharib Ali, Michael A. Riegler, Pål Halvorsen, Thomas de Lange, Ulas Bagci

Automatic analysis of colonoscopy images has been an active field of research motivated by the importance of early detection of precancerous polyps.

How Good is Google Bard's Visual Understanding? An Empirical Study on Open Challenges

1 code implementation • 27 Jul 2023 • Haotong Qin, Ge-Peng Ji, Salman Khan, Deng-Ping Fan, Fahad Shahbaz Khan, Luc van Gool

Google's Bard has emerged as a formidable competitor to OpenAI's ChatGPT in the field of conversational AI.

CalibNet: Dual-branch Cross-modal Calibration for RGB-D Salient Instance Segmentation

1 code implementation • 16 Jul 2023 • Jialun Pei, Tao Jiang, He Tang, Nian Liu, Yueming Jin, Deng-Ping Fan, Pheng-Ann Heng

We propose a novel approach for RGB-D salient instance segmentation using a dual-branch cross-modal feature calibration architecture called CalibNet.

Referring Camouflaged Object Detection

1 code implementation • 13 Jun 2023 • Xuying Zhang, Bowen Yin, Zheng Lin, Qibin Hou, Deng-Ping Fan, Ming-Ming Cheng

We consider the problem of referring camouflaged object detection (Ref-COD), a new task that aims to segment specified camouflaged objects based on a small set of referring images with salient target objects.

Instructive Feature Enhancement for Dichotomous Medical Image Segmentation

1 code implementation • 6 Jun 2023 • Lian Liu, Han Zhou, Jiongquan Chen, Sijing Liu, Wenlong Shi, Dong Ni, Deng-Ping Fan, Xin Yang

Deep neural networks have been widely applied in dichotomous medical image segmentation (DMIS) of many anatomical structures in several modalities, achieving promising performance.

Mindstorms in Natural Language-Based Societies of Mind

no code implementations • 26 May 2023 • Mingchen Zhuge, Haozhe Liu, Francesco Faccio, Dylan R. Ashley, Róbert Csordás, Anand Gopalakrishnan, Abdullah Hamdi, Hasan Abed Al Kader Hammoud, Vincent Herrmann, Kazuki Irie, Louis Kirsch, Bing Li, Guohao Li, Shuming Liu, Jinjie Mai, Piotr Piękos, Aditya Ramesh, Imanol Schlag, Weimin Shi, Aleksandar Stanić, Wenyi Wang, Yuhui Wang, Mengmeng Xu, Deng-Ping Fan, Bernard Ghanem, Jürgen Schmidhuber

What should be the social structure of an NLSOM?

WinDB: HMD-free and Distortion-free Panoptic Video Fixation Learning

2 code implementations • 23 May 2023 • Guotao Wang, Chenglizhao Chen, Aimin Hao, Hong Qin, Deng-Ping Fan

The main reason is that there always exist "blind zooms" when using HMD to collect fixations since the users cannot keep spinning their heads to explore the entire panoptic scene all the time.

Segment Anything Model for Medical Images?

1 code implementation • 28 Apr 2023 • Yuhao Huang, Xin Yang, Lian Liu, Han Zhou, Ao Chang, Xinrui Zhou, Rusi Chen, Junxuan Yu, Jiongquan Chen, Chaoyu Chen, Sijing Liu, Haozhe Chi, Xindi Hu, Kejuan Yue, Lei LI, Vicente Grau, Deng-Ping Fan, Fajin Dong, Dong Ni

To fully validate SAM's performance on medical data, we collected and sorted 53 open-source datasets and built a large medical segmentation dataset with 18 modalities, 84 objects, 125 object-modality paired targets, 1050K 2D images, and 6033K masks.

Indiscernible Object Counting in Underwater Scenes

1 code implementation • CVPR 2023 • Guolei Sun, Zhaochong An, Yun Liu, Ce Liu, Christos Sakaridis, Deng-Ping Fan, Luc van Gool

We further advance the frontier of this field by systematically studying a new challenge named indiscernible object counting (IOC), the goal of which is to count objects that are blended with respect to their surroundings.

Advances in Deep Concealed Scene Understanding

1 code implementation • 21 Apr 2023 • Deng-Ping Fan, Ge-Peng Ji, Peng Xu, Ming-Ming Cheng, Christos Sakaridis, Luc van Gool

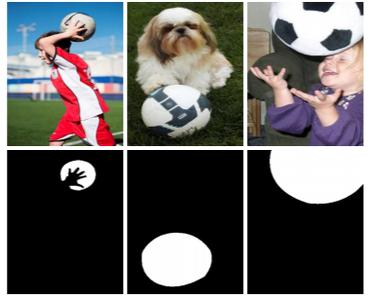

Concealed scene understanding (CSU) is a hot computer vision topic aiming to perceive objects exhibiting camouflage.

SAM Struggles in Concealed Scenes -- Empirical Study on "Segment Anything"

no code implementations • 12 Apr 2023 • Ge-Peng Ji, Deng-Ping Fan, Peng Xu, Ming-Ming Cheng, BoWen Zhou, Luc van Gool

Segmenting anything is a ground-breaking step toward artificial general intelligence, and the Segment Anything Model (SAM) greatly fosters the foundation models for computer vision.

CamDiff: Camouflage Image Augmentation via Diffusion Model

1 code implementation • 11 Apr 2023 • Xue-Jing Luo, Shuo Wang, Zongwei Wu, Christos Sakaridis, Yun Cheng, Deng-Ping Fan, Luc van Gool

Specifically, we leverage the latent diffusion model to synthesize salient objects in camouflaged scenes, while using the zero-shot image classification ability of the Contrastive Language-Image Pre-training (CLIP) model to prevent synthesis failures and ensure the synthesized object aligns with the input prompt.

QR-CLIP: Introducing Explicit Open-World Knowledge for Location and Time Reasoning

no code implementations • 2 Feb 2023 • Weimin Shi, Mingchen Zhuge, Dehong Gao, Zhong Zhou, Ming-Ming Cheng, Deng-Ping Fan

Daily images may convey abstract meanings that require us to memorize and infer profound information from them.

Source-free Depth for Object Pop-out

1 code implementation • ICCV 2023 • Zongwei Wu, Danda Pani Paudel, Deng-Ping Fan, Jingjing Wang, Shuo Wang, Cédric Demonceaux, Radu Timofte, Luc van Gool

In this work, we adapt such depth inference models for object segmentation using the objects' "pop-out" prior in 3D.

CamoFormer: Masked Separable Attention for Camouflaged Object Detection

1 code implementation • 10 Dec 2022 • Bowen Yin, Xuying Zhang, Qibin Hou, Bo-Yuan Sun, Deng-Ping Fan, Luc van Gool

Identifying and segmenting camouflaged objects from the background is challenging.

Masked Vision-Language Transformer in Fashion

1 code implementation • 27 Oct 2022 • Ge-Peng Ji, Mingcheng Zhuge, Dehong Gao, Deng-Ping Fan, Christos Sakaridis, Luc van Gool

We present a masked vision-language transformer (MVLT) for fashion-specific multi-modal representation.

OSFormer: One-Stage Camouflaged Instance Segmentation with Transformers

1 code implementation • 5 Jul 2022 • Jialun Pei, Tianyang Cheng, Deng-Ping Fan, He Tang, Chuanbo Chen, Luc van Gool

We present OSFormer, the first one-stage transformer framework for camouflaged instance segmentation (CIS).

GCoNet+: A Stronger Group Collaborative Co-Salient Object Detector

2 code implementations • 30 May 2022 • Peng Zheng, Huazhu Fu, Deng-Ping Fan, Qi Fan, Jie Qin, Yu-Wing Tai, Chi-Keung Tang, Luc van Gool

In this paper, we present a novel end-to-end group collaborative learning network, termed GCoNet+, which can effectively and efficiently (250 fps) identify co-salient objects in natural scenes.

Ranked #1 on Co-Salient Object Detection on CoCA

Deep Gradient Learning for Efficient Camouflaged Object Detection

1 code implementation • 25 May 2022 • Ge-Peng Ji, Deng-Ping Fan, Yu-Cheng Chou, Dengxin Dai, Alexander Liniger, Luc van Gool

This paper introduces DGNet, a novel deep framework that exploits object gradient supervision for camouflaged object detection (COD).

Towards Deeper Understanding of Camouflaged Object Detection

1 code implementation • 23 May 2022 • Yunqiu Lv, Jing Zhang, Yuchao Dai, Aixuan Li, Nick Barnes, Deng-Ping Fan

With the above understanding about camouflaged objects, we present the first triple-task learning framework to simultaneously localize, segment, and rank camouflaged objects, indicating the conspicuousness level of camouflage.

Video Polyp Segmentation: A Deep Learning Perspective

4 code implementations • 27 Mar 2022 • Ge-Peng Ji, Guobao Xiao, Yu-Cheng Chou, Deng-Ping Fan, Kai Zhao, Geng Chen, Luc van Gool

We present the first comprehensive video polyp segmentation (VPS) study in the deep learning era.

Ranked #2 on Video Polyp Segmentation on SUN-SEG-Easy (Unseen)

Practical Blind Image Denoising via Swin-Conv-UNet and Data Synthesis

2 code implementations • 24 Mar 2022 • Kai Zhang, Yawei Li, Jingyun Liang, JieZhang Cao, Yulun Zhang, Hao Tang, Deng-Ping Fan, Radu Timofte, Luc van Gool

While recent years have witnessed a dramatic upsurge of exploiting deep neural networks toward solving image denoising, existing methods mostly rely on simple noise assumptions, such as additive white Gaussian noise (AWGN), JPEG compression noise and camera sensor noise, and a general-purpose blind denoising method for real images remains unsolved.

Ranked #1 on Image Denoising on urban100 sigma15

Implicit Motion Handling for Video Camouflaged Object Detection

1 code implementation • CVPR 2022 • Xuelian Cheng, Huan Xiong, Deng-Ping Fan, Yiran Zhong, Mehrtash Harandi, Tom Drummond, ZongYuan Ge

We propose a new video camouflaged object detection (VCOD) framework that can exploit both short-term dynamics and long-term temporal consistency to detect camouflaged objects from video frames.

Ranked #2 on Camouflaged Object Segmentation on Camouflaged Animal Dataset (using extra training data)

Highly Accurate Dichotomous Image Segmentation

1 code implementation • 6 Mar 2022 • Xuebin Qin, Hang Dai, Xiaobin Hu, Deng-Ping Fan, Ling Shao, Luc Van Gool

We present a systematic study on a new task called dichotomous image segmentation (DIS), which aims to segment objects from natural images with high accuracy.

Ranked #5 on Dichotomous Image Segmentation on DIS-TE1

Facial-Sketch Synthesis: A New Challenge

3 code implementations • 31 Dec 2021 • Deng-Ping Fan, Ziling Huang, Peng Zheng, Hong Liu, Xuebin Qin, Luc van Gool

In addition, we conduct comprehensive experiments on 19 existing cutting-edge models.

Weakly Supervised Visual-Auditory Fixation Prediction with Multigranularity Perception

1 code implementation • 27 Dec 2021 • Guotao Wang, Chenglizhao Chen, Deng-Ping Fan, Aimin Hao, Hong Qin

Moreover, we distill knowledge from these regions to obtain complete new spatial-temporal-audio (STA) fixation prediction (FP) networks, enabling broad applications in cases where video tags are not available.

Dense Uncertainty Estimation

1 code implementation • 13 Oct 2021 • Jing Zhang, Yuchao Dai, Mochu Xiang, Deng-Ping Fan, Peyman Moghadam, Mingyi He, Christian Walder, Kaihao Zhang, Mehrtash Harandi, Nick Barnes

Deep neural networks can be roughly divided into deterministic neural networks and stochastic neural networks. The former is usually trained to achieve a mapping from input space to output space via maximum likelihood estimation for the weights, which leads to deterministic predictions during testing.

Identifying Concealed Objects from Videos

no code implementations • 29 Sep 2021 • Xuelian Cheng, Huan Xiong, Deng-Ping Fan, Yiran Zhong, Mehrtash Harandi, Tom Drummond, ZongYuan Ge

The proposed SLT-Net leverages both short-term dynamics and long-term temporal consistency to detect concealed objects in continuous video frames.

RGB-D Saliency Detection via Cascaded Mutual Information Minimization

1 code implementation • ICCV 2021 • Jing Zhang, Deng-Ping Fan, Yuchao Dai, Xin Yu, Yiran Zhong, Nick Barnes, Ling Shao

In this paper, we introduce a novel multi-stage cascaded learning framework via mutual information minimization to "explicitly" model the multi-modal information between RGB image and depth data.

Specificity-preserving RGB-D Saliency Detection

3 code implementations • ICCV 2021 • Tao Zhou, Deng-Ping Fan, Geng Chen, Yi Zhou, Huazhu Fu

To effectively fuse cross-modal features in the shared learning network, we propose a cross-enhanced integration module (CIM) and then propagate the fused feature to the next layer for integrating cross-level information.

Polyp-PVT: Polyp Segmentation with Pyramid Vision Transformers

2 code implementations • 16 Aug 2021 • Bo Dong, Wenhai Wang, Deng-Ping Fan, Jinpeng Li, Huazhu Fu, Ling Shao

Unlike existing CNN-based methods, we adopt a transformer encoder, which learns more powerful and robust representations.

Ranked #9 on Medical Image Segmentation on CVC-ColonDB

Full-Duplex Strategy for Video Object Segmentation

1 code implementation • ICCV 2021 • Ge-Peng Ji, Deng-Ping Fan, Keren Fu, Zhe Wu, Jianbing Shen, Ling Shao

Previous video object segmentation approaches mainly focus on using simplex solutions between appearance and motion, limiting feature collaboration efficiency among and across these two cues.

Ranked #7 on Video Polyp Segmentation on SUN-SEG-Hard (Unseen)

PVT v2: Improved Baselines with Pyramid Vision Transformer

16 code implementations • 25 Jun 2021 • Wenhai Wang, Enze Xie, Xiang Li, Deng-Ping Fan, Kaitao Song, Ding Liang, Tong Lu, Ping Luo, Ling Shao

We hope this work will facilitate state-of-the-art Transformer research in computer vision.

Ranked #23 on Object Detection on COCO-O

Probabilistic Model Distillation for Semantic Correspondence

1 code implementation • CVPR 2021 • Xin Li, Deng-Ping Fan, Fan Yang, Ao Luo, Hong Cheng, Zicheng Liu

We address this problem with the use of a novel Probabilistic Model Distillation (PMD) approach which transfers knowledge learned by a probabilistic teacher model on synthetic data to a static student model with the use of unlabeled real image pairs.

From Semantic Categories to Fixations: A Novel Weakly-Supervised Visual-Auditory Saliency Detection Approach

1 code implementation • CVPR 2021 • Guotao Wang, Chenglizhao Chen, Deng-Ping Fan, Aimin Hao, Hong Qin

Thanks to rapid advances in deep learning techniques and the wide availability of large-scale training sets, the performance of video saliency detection models has been improving steadily and significantly.

Progressively Normalized Self-Attention Network for Video Polyp Segmentation

3 code implementations • 18 May 2021 • Ge-Peng Ji, Yu-Cheng Chou, Deng-Ping Fan, Geng Chen, Huazhu Fu, Debesh Jha, Ling Shao

Existing video polyp segmentation (VPS) models typically employ convolutional neural networks (CNNs) to extract features.

Ranked #6 on Video Polyp Segmentation on SUN-SEG-Easy (Unseen)

Salient Objects in Clutter

2 code implementations • 7 May 2021 • Deng-Ping Fan, Jing Zhang, Gang Xu, Ming-Ming Cheng, Ling Shao

This design bias has led to a saturation in performance for state-of-the-art SOD models when evaluated on existing datasets.

Camouflaged Object Segmentation with Distraction Mining

1 code implementation • CVPR 2021 • Haiyang Mei, Ge-Peng Ji, Ziqi Wei, Xin Yang, Xiaopeng Wei, Deng-Ping Fan

In this paper, we strive to embrace challenges towards effective and efficient COS. To this end, we develop a bio-inspired framework, termed Positioning and Focus Network (PFNet), which mimics the process of predation in nature.

Ranked #12 on Dichotomous Image Segmentation on DIS-TE3

Generative Transformer for Accurate and Reliable Salient Object Detection

2 code implementations • 20 Apr 2021 • Yuxin Mao, Jing Zhang, Zhexiong Wan, Yuchao Dai, Aixuan Li, Yunqiu Lv, Xinyu Tian, Deng-Ping Fan, Nick Barnes

For the former, we apply a transformer to a deterministic model and explain that its effective structure modeling and global context modeling abilities lead to superior performance compared with CNN-based frameworks.

Mutual Graph Learning for Camouflaged Object Detection

1 code implementation • CVPR 2021 • Qiang Zhai, Xin Li, Fan Yang, Chenglizhao Chen, Hong Cheng, Deng-Ping Fan

Automatically detecting/segmenting object(s) that blend in with their surroundings is difficult for current models.

Kaleido-BERT: Vision-Language Pre-training on Fashion Domain

1 code implementation • CVPR 2021 • Mingchen Zhuge, Dehong Gao, Deng-Ping Fan, Linbo Jin, Ben Chen, Haoming Zhou, Minghui Qiu, Ling Shao

We present a new vision-language (VL) pre-training model dubbed Kaleido-BERT, which introduces a novel kaleido strategy for fashion cross-modality representations from transformers.

Group Collaborative Learning for Co-Salient Object Detection

1 code implementation • CVPR 2021 • Qi Fan, Deng-Ping Fan, Huazhu Fu, Chi Keung Tang, Ling Shao, Yu-Wing Tai

We present a novel group collaborative learning framework (GCoNet) capable of detecting co-salient objects in real time (16ms), by simultaneously mining consensus representations at group level based on the two necessary criteria: 1) intra-group compactness to better formulate the consistency among co-salient objects by capturing their inherent shared attributes using our novel group affinity module; 2) inter-group separability to effectively suppress the influence of noisy objects on the output by introducing our new group collaborating module conditioning the inconsistent consensus.

Ranked #5 on Co-Salient Object Detection on CoCA

Simultaneously Localize, Segment and Rank the Camouflaged Objects

1 code implementation • CVPR 2021 • Yunqiu Lv, Jing Zhang, Yuchao Dai, Aixuan Li, Bowen Liu, Nick Barnes, Deng-Ping Fan

With the above understanding about camouflaged objects, we present the first ranking based COD network (Rank-Net) to simultaneously localize, segment and rank camouflaged objects.

Pyramid Vision Transformer: A Versatile Backbone for Dense Prediction without Convolutions

9 code implementations • ICCV 2021 • Wenhai Wang, Enze Xie, Xiang Li, Deng-Ping Fan, Kaitao Song, Ding Liang, Tong Lu, Ping Luo, Ling Shao

Unlike the recently-proposed Transformer model (e.g., ViT) that is specially designed for image classification, we propose Pyramid Vision Transformer (PVT), which overcomes the difficulties of porting Transformer to various dense prediction tasks.

Ranked #5 on Semantic Segmentation on SynPASS

Concealed Object Detection

1 code implementation • 20 Feb 2021 • Deng-Ping Fan, Ge-Peng Ji, Ming-Ming Cheng, Ling Shao

We present the first systematic study on concealed object detection (COD), which aims to identify objects that are "perfectly" embedded in their background.

Ranked #5 on Camouflaged Object Segmentation on CHAMELEON

Salient Object Detection via Integrity Learning

3 code implementations • 19 Jan 2021 • Mingchen Zhuge, Deng-Ping Fan, Nian Liu, Dingwen Zhang, Dong Xu, Ling Shao

We define the concept of integrity at both a micro and macro level.

Boundary-Aware Segmentation Network for Mobile and Web Applications

5 code implementations • 12 Jan 2021 • Xuebin Qin, Deng-Ping Fan, Chenyang Huang, Cyril Diagne, Zichen Zhang, Adrià Cabeza Sant'Anna, Albert Suàrez, Martin Jagersand, Ling Shao

In this paper, we propose a simple yet powerful Boundary-Aware Segmentation Network (BASNet), which comprises a predict-refine architecture and a hybrid loss, for highly accurate image segmentation.

Uncertainty-Guided Transformer Reasoning for Camouflaged Object Detection

1 code implementation • ICCV 2021 • Fan Yang, Qiang Zhai, Xin Li, Rui Huang, Ao Luo, Hong Cheng, Deng-Ping Fan

Spotting objects that are visually adapted to their surroundings is challenging for both humans and AI.

Light Field Salient Object Detection: A Review and Benchmark

1 code implementation • 10 Oct 2020 • Keren Fu, Yao Jiang, Ge-Peng Ji, Tao Zhou, Qijun Zhao, Deng-Ping Fan

Secondly, we benchmark nine representative light field SOD models together with several cutting-edge RGB-D SOD models on four widely used light field datasets, and draw insightful discussions and analyses from the results, including a comparison between light field SOD and RGB-D SOD models.

Uncertainty Inspired RGB-D Saliency Detection

4 code implementations • 7 Sep 2020 • Jing Zhang, Deng-Ping Fan, Yuchao Dai, Saeed Anwar, Fatemeh Saleh, Sadegh Aliakbarian, Nick Barnes

Our framework includes two main models: 1) a generator model, which maps the input image and latent variable to stochastic saliency prediction, and 2) an inference model, which gradually updates the latent variable by sampling it from the true or approximate posterior distribution.

Ranked #1 on RGB-D Salient Object Detection on LFSD

Siamese Network for RGB-D Salient Object Detection and Beyond

2 code implementations • 26 Aug 2020 • Keren Fu, Deng-Ping Fan, Ge-Peng Ji, Qijun Zhao, Jianbing Shen, Ce Zhu

Inspired by the observation that RGB and depth modalities actually present certain commonality in distinguishing salient objects, a novel joint learning and densely cooperative fusion (JL-DCF) architecture is designed to learn from both RGB and depth inputs through a shared network backbone, known as the Siamese architecture.

Ranked #3 on RGB-D Salient Object Detection on STERE

RGB-D Salient Object Detection: A Survey

9 code implementations • 1 Aug 2020 • Tao Zhou, Deng-Ping Fan, Ming-Ming Cheng, Jianbing Shen, Ling Shao

Further, considering that the light field can also provide depth maps, we review SOD models and popular benchmark datasets from this domain as well.

Re-thinking Co-Salient Object Detection

2 code implementations • 7 Jul 2020 • Deng-Ping Fan, Tengpeng Li, Zheng Lin, Ge-Peng Ji, Dingwen Zhang, Ming-Ming Cheng, Huazhu Fu, Jianbing Shen

CoSOD is an emerging and rapidly growing extension of salient object detection (SOD), which aims to detect the co-occurring salient objects in a group of images.

Ranked #7 on Co-Salient Object Detection on CoCA

Bifurcated backbone strategy for RGB-D salient object detection

2 code implementations • 6 Jul 2020 • Yingjie Zhai, Deng-Ping Fan, Jufeng Yang, Ali Borji, Ling Shao, Junwei Han, Liang Wang

In particular, first, we propose to regroup the multi-level features into teacher and student features using a bifurcated backbone strategy (BBS).

Ranked #2 on RGB-D Salient Object Detection on RGBD135

PraNet: Parallel Reverse Attention Network for Polyp Segmentation

4 code implementations • 13 Jun 2020 • Deng-Ping Fan, Ge-Peng Ji, Tao Zhou, Geng Chen, Huazhu Fu, Jianbing Shen, Ling Shao

To address these challenges, we propose a parallel reverse attention network (PraNet) for accurate polyp segmentation in colonoscopy images.

Ranked #7 on Video Polyp Segmentation on SUN-SEG-Easy (Unseen)

Camouflaged Object Detection

2 code implementations • CVPR 2020 • Deng-Ping Fan, Ge-Peng Ji, Guolei Sun, Ming-Ming Cheng, Jianbing Shen, Ling Shao

We present a comprehensive study on a new task named camouflaged object detection (COD), which aims to identify objects that are "seamlessly" embedded in their surroundings.

Ranked #10 on Camouflaged Object Segmentation on CAMO

Taking a Deeper Look at Co-Salient Object Detection

1 code implementation • CVPR 2020 • Deng-Ping Fan, Zheng Lin, Ge-Peng Ji, Dingwen Zhang, Huazhu Fu, Ming-Ming Cheng

Co-salient object detection (CoSOD) is a newly emerging and rapidly growing branch of salient object detection (SOD), which aims to detect the co-occurring salient objects in multiple images.

Ranked #2 on Co-Salient Object Detection on iCoSeg

Bilateral Attention Network for RGB-D Salient Object Detection

1 code implementation • 30 Apr 2020 • Zhao Zhang, Zheng Lin, Jun Xu, Wenda Jin, Shao-Ping Lu, Deng-Ping Fan

To better explore salient information in both foreground and background regions, this paper proposes a Bilateral Attention Network (BiANet) for the RGB-D SOD task.

Ranked #3 on RGB-D Salient Object Detection on RGBD135

Inf-Net: Automatic COVID-19 Lung Infection Segmentation from CT Images

3 code implementations • 22 Apr 2020 • Deng-Ping Fan, Tao Zhou, Ge-Peng Ji, Yi Zhou, Geng Chen, Huazhu Fu, Jianbing Shen, Ling Shao

Coronavirus Disease 2019 (COVID-19) spread globally in early 2020, causing the world to face an existential health crisis.

JL-DCF: Joint Learning and Densely-Cooperative Fusion Framework for RGB-D Salient Object Detection

1 code implementation • CVPR 2020 • Keren Fu, Deng-Ping Fan, Ge-Peng Ji, Qijun Zhao

This paper proposes a novel joint learning and densely-cooperative fusion (JL-DCF) architecture for RGB-D salient object detection.

Ranked #6 on RGB-D Salient Object Detection on NLPR

JCS: An Explainable COVID-19 Diagnosis System by Joint Classification and Segmentation

1 code implementation • 15 Apr 2020 • Yu-Huan Wu, Shang-Hua Gao, Jie Mei, Jun Xu, Deng-Ping Fan, Rong-Guo Zhang, Ming-Ming Cheng

The chest CT scan test provides a valuable complementary tool to the RT-PCR test, and it can identify patients at an early stage with high sensitivity.

UC-Net: Uncertainty Inspired RGB-D Saliency Detection via Conditional Variational Autoencoders

1 code implementation • CVPR 2020 • Jing Zhang, Deng-Ping Fan, Yuchao Dai, Saeed Anwar, Fatemeh Sadat Saleh, Tong Zhang, Nick Barnes

In this paper, we propose the first framework (UCNet) to employ uncertainty for RGB-D saliency detection by learning from the data labeling process.

Ranked #4 on RGB-D Salient Object Detection on LFSD

Scoot: A Perceptual Metric for Facial Sketches

1 code implementation • ICCV 2019 • Deng-Ping Fan, Shengchuan Zhang, Yu-Huan Wu, Yun Liu, Ming-Ming Cheng, Bo Ren, Paul L. Rosin, Rongrong Ji

In this paper, we design a perceptual metric, called Structure Co-Occurrence Texture (Scoot), which simultaneously considers the block-level spatial structure and co-occurrence texture statistics.

Rethinking RGB-D Salient Object Detection: Models, Data Sets, and Large-Scale Benchmarks

2 code implementations • 15 Jul 2019 • Deng-Ping Fan, Zheng Lin, Jia-Xing Zhao, Yun Liu, Zhao Zhang, Qibin Hou, Menglong Zhu, Ming-Ming Cheng

The use of RGB-D information for salient object detection has been extensively explored in recent years.

Ranked #4 on RGB-D Salient Object Detection on RGBD135

Shifting More Attention to Video Salient Object Detection

1 code implementation • CVPR 2019 • Deng-Ping Fan, Wenguan Wang, Ming-Ming Cheng, Jianbing Shen

This is the first work that explicitly emphasizes the challenge of saliency shift, i.e., the video salient object(s) may dynamically change.

Ranked #1 on Video Salient Object Detection on DAVSOD-Difficult20

LSANet: Feature Learning on Point Sets by Local Spatial Aware Layer

1 code implementation • 14 May 2019 • Lin-Zhuo Chen, Xuan-Yi Li, Deng-Ping Fan, Kai Wang, Shao-Ping Lu, Ming-Ming Cheng

We design a novel Local Spatial Aware (LSA) layer, which learns to generate Spatial Distribution Weights (SDWs) hierarchically, based on the spatial relationships within local regions, for spatially independent operations. This establishes a relationship between these operations and the spatial distribution, so that the local geometric structure is captured sensitively. We further propose LSANet, which is built on the LSA layer and better aggregates spatial information with associated features in each layer of the network. Experiments show that LSANet achieves performance on par with or better than state-of-the-art methods on challenging benchmark datasets.

Enhanced-alignment Measure for Binary Foreground Map Evaluation

2 code implementations • 26 May 2018 • Deng-Ping Fan, Cheng Gong, Yang Cao, Bo Ren, Ming-Ming Cheng, Ali Borji

Existing binary foreground map (FM) measures address various types of errors in either pixel-wise or structural ways.
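
The snippet does not spell out the measure itself. The sketch below follows the core formulation of E-measure as it is commonly described: each map is centred by its global mean (bias matrix φ = I - μ·A), an alignment matrix ξ = 2 φ_GT ∘ φ_FM / (φ_GT ∘ φ_GT + φ_FM ∘ φ_FM) is formed, each entry is mapped through f(ξ) = (1 + ξ)² / 4, and the result is averaged over all pixels. It is a minimal pure-Python sketch that assumes binary maps given as nested lists; the function name and the small `eps` term are assumptions of this sketch. Reference implementations additionally special-case all-background and all-foreground ground truths, which is omitted here.

```python
def enhanced_alignment_measure(fm, gt, eps=1e-8):
    """Core E-measure score for a binary foreground map `fm` and a
    ground-truth map `gt`, given as equal-sized 2-D lists of 0/1 values."""
    h, w = len(gt), len(gt[0])
    n = h * w
    # Global means used to build the bias matrices (map minus its mean).
    mu_fm = sum(map(sum, fm)) / n
    mu_gt = sum(map(sum, gt)) / n
    total = 0.0
    for i in range(h):
        for j in range(w):
            phi_fm = fm[i][j] - mu_fm
            phi_gt = gt[i][j] - mu_gt
            # Alignment term in [-1, 1]; eps (an assumption of this sketch) avoids 0/0.
            xi = 2.0 * phi_gt * phi_fm / (phi_gt ** 2 + phi_fm ** 2 + eps)
            # Enhanced alignment f(xi) = (1 + xi)^2 / 4 maps [-1, 1] onto [0, 1].
            total += (1.0 + xi) ** 2 / 4.0
    return total / n


# Hypothetical 3x3 example maps.
gt = [[0, 1, 1], [0, 1, 0], [0, 0, 0]]
inverted = [[1 - v for v in row] for row in gt]
empty = [[0] * 3 for _ in range(3)]

print(round(enhanced_alignment_measure(gt, gt), 4))        # → 1.0 (perfect match)
print(round(enhanced_alignment_measure(inverted, gt), 4))  # → 0.0 (fully inverted)
print(round(enhanced_alignment_measure(empty, gt), 4))     # → 0.25 (empty prediction)
```

Because the maps are compared after removing their means, a prediction that is everywhere constant scores a neutral 0.25 rather than being rewarded for matching the large background.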

Semantic Edge Detection with Diverse Deep Supervision

1 code implementation • 9 Apr 2018 • Yun Liu, Ming-Ming Cheng, Deng-Ping Fan, Le Zhang, Jiawang Bian, DaCheng Tao

Semantic edge detection (SED), which aims at jointly extracting edges as well as their category information, has far-reaching applications in domains such as semantic segmentation, object proposal generation, and object recognition.

Face Sketch Synthesis Style Similarity: A New Structure Co-occurrence Texture Measure

1 code implementation • 9 Apr 2018 • Deng-Ping Fan, Shengchuan Zhang, Yu-Huan Wu, Ming-Ming Cheng, Bo Ren, Rongrong Ji, Paul L. Rosin

However, human perception of the similarity of two sketches will consider both structure and texture as essential factors and is not sensitive to slight ("pixel-level") mismatches.

Salient Objects in Clutter: Bringing Salient Object Detection to the Foreground

no code implementations • ECCV 2018 • Deng-Ping Fan, Ming-Ming Cheng, Jiang-Jiang Liu, Shang-Hua Gao, Qibin Hou, Ali Borji

Our analysis identifies a serious design bias of existing SOD datasets which assumes that each image contains at least one clearly outstanding salient object in low clutter.

Structure-measure: A New Way to Evaluate Foreground Maps

1 code implementation • ICCV 2017 • Deng-Ping Fan, Ming-Ming Cheng, Yun Liu, Tao Li, Ali Borji

Our new measure simultaneously evaluates region-aware and object-aware structural similarity between a saliency map (SM) and a ground-truth (GT) map.
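
For reference, the overall score combines the two similarity terms with a weighting factor. In the expression below, S_o denotes the object-aware term and S_r the region-aware term; α = 0.5 is the setting commonly used with the original measure. The definitions of S_o and S_r themselves are not reproduced here.

```latex
S = \alpha \cdot S_o + (1 - \alpha) \cdot S_r, \qquad \alpha \in [0, 1]
```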