ShakeDrop Regularization for Deep Residual Learning

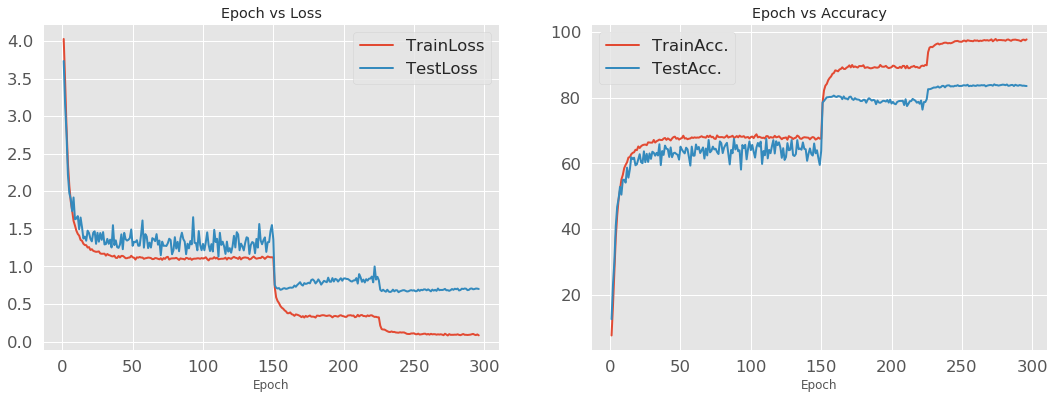

Overfitting is a crucial problem in deep neural networks, even in the latest network architectures. In this paper, to relieve the overfitting effect of ResNet and its improvements (i.e., Wide ResNet, PyramidNet, and ResNeXt), we propose a new regularization method called ShakeDrop regularization. ShakeDrop is inspired by Shake-Shake, which is an effective regularization method but can be applied to ResNeXt only. ShakeDrop is more effective than Shake-Shake and can be applied not only to ResNeXt but also to ResNet, Wide ResNet, and PyramidNet. The key is to achieve stable training. Because effective regularization often causes unstable training, we introduce a training stabilizer, which is an unusual use of an existing regularizer. Through experiments under various conditions, we demonstrate the conditions under which ShakeDrop works well.
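The abstract does not spell out the update rule, so the following is only a minimal sketch under stated assumptions. It assumes the commonly described forward rule, in which the residual branch output F(x) is scaled by b + α − bα, with b a Bernoulli gate and α drawn uniformly from [-1, 1]; at test time the expected value of that coefficient is used. The function names, the `p_keep` parameter, and the list-based "tensors" are hypothetical and chosen for illustration. The separate random coefficient used in the backward pass is omitted.

```python
import random

def shakedrop_scale(p_keep, training, rng=random, alpha_range=(-1.0, 1.0)):
    """Coefficient applied to the residual branch output F(x).

    Assumed rule (not stated in the abstract): b + alpha - b * alpha,
    where b ~ Bernoulli(p_keep) and alpha ~ Uniform(alpha_range).
    """
    lo, hi = alpha_range
    if not training:
        # Expected value of b + alpha - b * alpha for independent b and alpha.
        mean_alpha = (lo + hi) / 2.0
        return p_keep + mean_alpha - p_keep * mean_alpha
    b = 1.0 if rng.random() < p_keep else 0.0
    alpha = rng.uniform(lo, hi)
    # b == 1 leaves the branch unchanged; b == 0 scales it by alpha.
    return b + alpha - b * alpha

def residual_block(x, branch, p_keep, training, rng=random):
    """Apply x + scale * F(x) element-wise; `branch` stands in for F."""
    fx = branch(x)
    scale = shakedrop_scale(p_keep, training, rng)
    return [xi + scale * fi for xi, fi in zip(x, fx)]
```

With the gate set to 1 the block behaves like an ordinary residual block, and with the gate set to 0 the branch is perturbed by α, which can be negative. This may illustrate why the abstract describes the regularization as strong and in need of a training stabilizer.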

CIFAR-10

ImageNet

MS COCO

CIFAR-100
